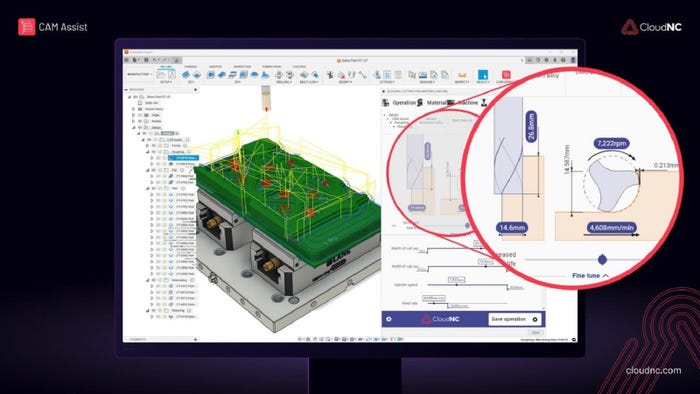



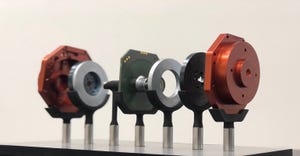

Component Technology (SDK) Is Powerful Fuel for Developers

Developers can use component technology to create an endless array of products. SDKs can be leveraged across multiple sectors.
