Motion Control

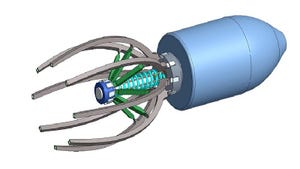

data-signal hybrid connectors

Industry

Green Energy Buffering Is Cleaning up in Supplier News

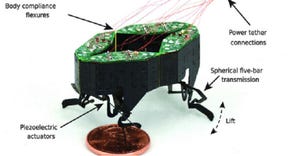

We’re also looking at data-signal hybrid connectors, pressure control valves, and high-speed actuators.
