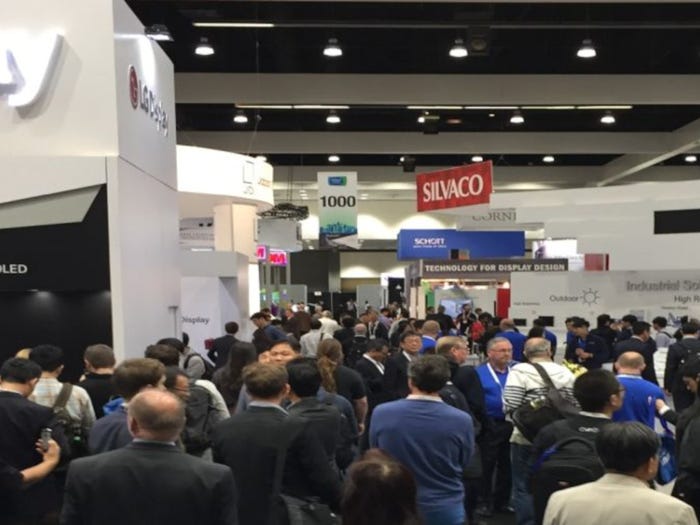

Display Week takes place May 12 through 18.

Display Week a Treat for Visual Senses

Whether for physical or virtual reality, display technology is set to dazzle in San Jose.