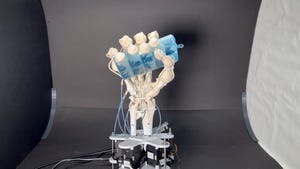

Robotics

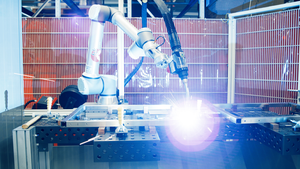

Future of mechanical engineering

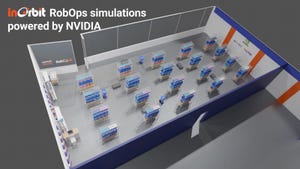

Automation

Mechanical Engineers Face a Changing Future

The soft skills of problem-solving and communication will be needed, while AI, additive manufacturing, and robot/human interaction will grow in importance.
