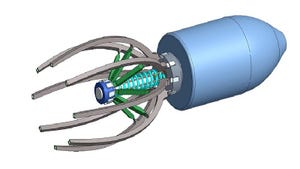

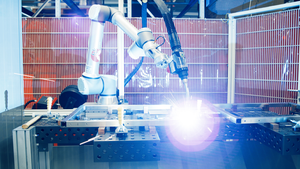

OnRobot Debuts Two Electrical Grippers for High-Payload Applications

OnRobot’s new electrical grippers are launching along with the new machine tending solution – AutoPilot – powered by D:PLOY and developed in collaboration with Ellison Technologies.

