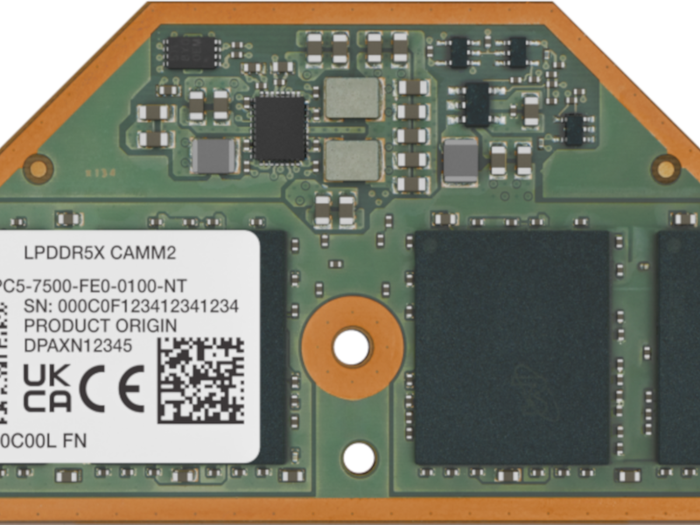

Apple's AI-ready iPad Pro.


Apple Unveils New iPad Amidst Jarring Video Promotion

Latest tablet stands on its own merits, but Apple's product promotion video raises eyebrows.

