Artificial Intelligence

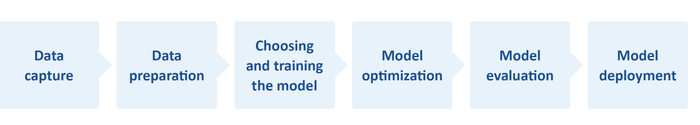

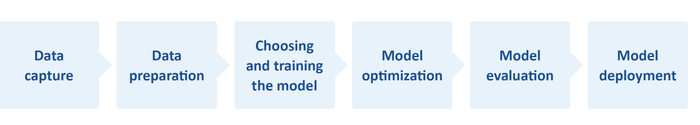

Understanding AI workflows is a vital part of creating embedded edge applications.

Embedded Systems

How Can Embedded Engineers Implement Edge AI Applications

Following these useful tips can help you create edge AI applications without straining processor and storage resources.
