Artificial Intelligence

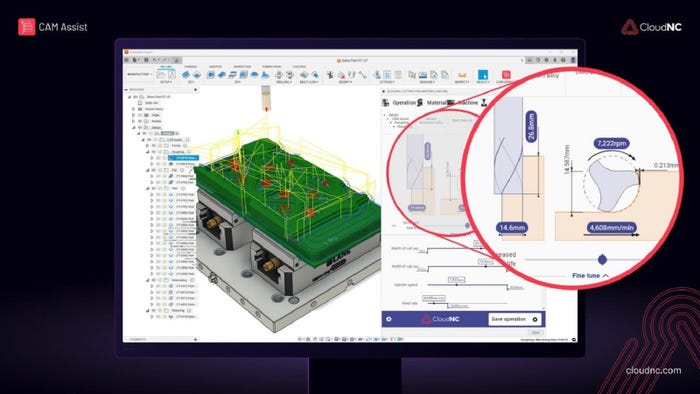

Catch These New AI Products and Systems

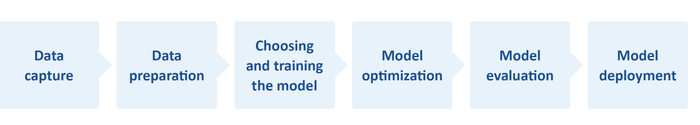

We’re looking at AI visual inspection, AI-driven CNC machining, an AI analyzer, and more.

