Will Generative AI Disrupt Our Lives For the Better?

This fledgling form of AI is a potential game changer for the way we work and interact, but many worry the tech’s effects will be negative.

March 30, 2023

Much of the recent buzz in the tech world has centered on the introduction of ChatGPT-4, the latest version of ChatGPT, now built on OpenAI’s GPT-4 model. ChatGPT is generative AI software that uses a conversational language model to answer a wide range of questions quickly, potentially cutting the time spent on many tasks.

But ChatGPT has raised questions about the role of generative AI software that, so far, no one can answer definitively. How accurate are the quick answers and information that generative software provides? Does the software threaten to radically alter, or even replace, many jobs humans now perform? And how ethical and free of bias is generative software at this point, given that ethics and bias remain challenges companies are still trying to resolve with existing forms of AI?

The consensus so far seems to be that ChatGPT-4 and other forms of generative AI will likely transform the way we work and interact, though to what extent remains to be seen. Maximizing the technology’s benefits will require skillful, careful human review of the outputs that generative AI tools produce.

Creating Something New

Generative AI is best defined as AI algorithms that generate new outputs based on the data they have been trained on. “Other forms of AI are used to automate processes, analyze data, and make predictions,” said Mark Beccue, an analyst covering AI for market intelligence firm Omdia, in an e-mail interview with Design News. But even the idea that AI creates something “new” is debatable, he noted.

“The idea that AI can create something new is a bit controversial, as creating is seen as a uniquely human ability. How can a machine create something new if it is only trained on historical data? AI is not capable of non-linear thinking: putting two disparate ideas together. Non-linear thinking is critical to creating new things and ideas.”

While most of the public had barely heard of generative AI until recently, the technology has been under development for well over a decade, according to Bryan Catanzaro, Vice President of Applied Deep Learning Research at Nvidia, speaking at the recent Nvidia GTC conference. “Generative AI has been researched for the past decade and a half,” he said.

Catanzaro added that the first version of GPT, the model family behind ChatGPT, which OpenAI introduced in 2018, could understand simple language commands. Subsequent versions added the ability to answer text questions and to harness data from the Internet, and the latest version, GPT-4, has better problem-solving abilities, including the ability to follow multi-turn interactions.

Educators worry that generative AI tools such as ChatGPT will enable students to ace tests without learning the material. An article on Mashable found that ChatGPT-4 scored in the 80th to 90th percentile on the reading and math portions of the SAT, though it scored only in the 54th percentile on the writing portion, which should ease some educators’ concerns. The same article noted that ChatGPT-4 also aced several high school Advanced Placement (AP) exams, though it performed relatively poorly on the AP English Literature exam.

Trusting Results

The mixed results ChatGPT-4 achieved on these tests have given skeptics reason to be wary of the technology. But even supporters warn that the technology’s results need to be checked.

“Reliability is the biggest obstacle,” conceded Ilya Sutskever, Co-founder and Chief Scientist of OpenAI, in an online conversation with Nvidia CEO Jensen Huang at the recent Nvidia GTC conference. “These networks do make mistakes, but with additional research we should be able to achieve higher reliability and more accurate guardrails.” Guardrails are limits set to better safeguard against inaccurate or biased results.

Mikaela Pisani, a data scientist at Rootstrap, said, “You have to validate any information from ChatGPT with other sources. It [ChatGPT] can help us solve some problems faster, but it will not replace our thinking.”

Omdia’s Mark Beccue said AI adopters need to continue with a guarded approach. “We are advising clients to proceed with caution. Companies that want to experiment with GAI [generative AI] should do so but should make sure they have guardrails for responsible AI in place. A great question any company should ask itself about AI is not ‘Can we do this AI?’ but rather ‘Should we do this AI?’”

Benefits for Product Design

With ChatGPT-4 bringing the potential of generative software into the public eye, both individuals and companies have started to dabble with the technology to gauge its benefits. One of these is Monolith, a company that itself uses generative AI in its data science tools to design products.

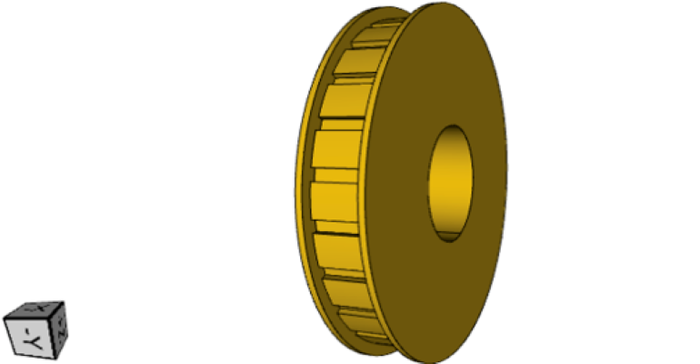

Richard Ahlfeld, founder and CEO of Monolith, said that, as an experiment, company engineers used ChatGPT to generate parametric scripts for the design of several industrial products, including a single-shaft gear, a stepped shaft, and finally, a turbine blade. “This is revolutionary,” Ahlfeld told Design News in an interview. “Our company has spent months generating these parametric scripts.”

Echoing the thoughts of others, Ahlfeld said that the scripts do not generate working parts out of the box and must be checked and modified by the company’s own engineers. But to him, the potential benefits of ChatGPT can be substantial. “For us, it enables us to generate parametric code in far less time than previously, and in turn enables us to create more part designs.”
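For readers unfamiliar with the term, a parametric script describes a part’s geometry in terms of named dimensions, so changing one value regenerates the design. The minimal Python sketch that follows is a hypothetical illustration for a stepped shaft, assuming the shaft is a simple series of cylinders. It is not Monolith’s code or ChatGPT’s output, and the names `ShaftStep`, `stepped_shaft`, `shaft_volume`, and `shoulder_positions` are invented for this example. It computes only basic geometry rather than producing a CAD model.

```python
# Hypothetical illustration only: not Monolith's code or ChatGPT output.
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ShaftStep:
    """One cylindrical section of a stepped shaft (dimensions in mm)."""
    diameter: float
    length: float


def stepped_shaft(diameters, lengths):
    """Build a hypothetical stepped shaft from per-section diameters and lengths.

    Every dimension is a parameter, so changing one value regenerates the
    whole design instead of requiring the geometry to be redrawn by hand.
    """
    if len(diameters) != len(lengths):
        raise ValueError("need exactly one length per diameter")
    if any(d <= 0 for d in diameters) or any(ln <= 0 for ln in lengths):
        raise ValueError("all dimensions must be positive")
    return [ShaftStep(d, ln) for d, ln in zip(diameters, lengths)]


def shaft_volume(steps):
    """Total volume in mm^3: the sum of pi * r^2 * L over all sections."""
    return sum(math.pi * (s.diameter / 2) ** 2 * s.length for s in steps)


def shoulder_positions(steps):
    """Axial positions (mm from the left end) where the diameter changes."""
    positions = []
    x = 0.0
    for prev, nxt in zip(steps, steps[1:]):
        x += prev.length  # advance to the end of the current section
        if prev.diameter != nxt.diameter:
            positions.append(x)
    return positions


if __name__ == "__main__":
    # Example parameters: arbitrary values chosen for illustration.
    shaft = stepped_shaft([20.0, 30.0, 25.0], [40.0, 60.0, 30.0])
    print(f"volume: {shaft_volume(shaft):.1f} mm^3")
    print("shoulders at:", shoulder_positions(shaft))
```

Running the script prints the shaft’s volume and the axial positions of its two shoulders. As Ahlfeld notes, generated scripts still need engineering review; a production script would typically drive a CAD tool and account for fillets, tolerances, and stresses, all of which this sketch omits.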

The Jobs Issue

The ability of generative AI to substantially reduce the time needed to perform tasks points to the technology’s most controversial aspect: the potential displacement of many jobs.

“There is a study I saw that ChatGPT could affect 19% of the workforce,” said Mary Elkordy, President and Founder of Elkordy Global Strategies, a public relations and digital marketing company. “I like AI as a tool that will be a resource to spur creativity, but not as a first line to start work.”

The study Elkordy refers to could well be a recent joint study by OpenAI, ChatGPT’s creator, the University of Pennsylvania, and OpenResearch. According to reports, the study, which relied on assessments from both humans and AI, produced startling results, estimating that 80% of the U.S. workforce could have at least 10% of their work tasks affected, with 19% of workers seeing at least half of their tasks affected.

When the study spelled out which occupations GPT could potentially affect, the results were even more surprising. While the presence of reporters and journalists, administrative assistants, and secretaries on the list was not unexpected, the AI also identified mathematicians, accountants and auditors, clinical data managers, climate change policy analysts, and blockchain engineers as having very high or complete exposure to GPTs. These occupations tend to be higher paying and require higher levels of skill.

To be sure, many companies are still in the exploratory stage with generative AI, with early adopters using the technology to augment humans doing existing tasks.

Jono Luk, Vice President of Product Management for Cisco Webex, said AI can benefit call centers, which often keep customers on hold for extended periods of time. “When you are a customer, you want your answers fast.” He believes humans will continue to play an important role in these call centers by handling more challenging issues.

“We are nowhere near replacing humans. AI will be able to help us meet unmet need [for handling calls] in the aggregate.”

Omdia’s Mark Beccue echoes an opinion still shared by many. “People have been saying AI will eliminate jobs since the market gained momentum in 2016. As AI becomes increasingly operationalized, it will take on work that can and should be automated. AI will change work roles for humans away from work that requires primarily linear thinking to work that requires primarily non-linear thinking–work that requires emotional intelligence, etc.”

Ethics and IP issues

As with other forms of AI, the issues of ethics and responsibility are emerging as generative AI becomes a larger part of everyday life.

For larger companies, this often means setting up guidelines and policies on the proper use of AI. “We have to adhere to core principles,” said Webex’s Jono Luk. “We are updating and having those conversations now. We have to realize there is a customer using our product.”

“The biggest reason generative AI is more of an issue is because most users are expecting magic and don’t necessarily see themselves as having to employ responsible AI practices; they just assume the outputs are good,” said Omdia’s Mark Beccue. “It puts responsibility on those who implement AI, any kind of AI, to use it responsibly.”

Intellectual property (IP) rights for generative AI will likely become a bigger issue as usage of the technology grows. Monolith’s Richard Ahlfeld wonders whether the parametric scripts ChatGPT-4 generated for the company’s experimental industrial parts could at some point become subject to IP rights.

“We don’t know if ChatGPT would at some point check for commercial rights,” said Ahlfeld.

Spencer Chin is a Senior Editor for Design News covering the electronics beat. He has many years of experience covering developments in components, semiconductors, subsystems, power, and other facets of electronics from both a business/supply-chain and technology perspective. He can be reached at [email protected].
