Can AI Turn Around the Fortunes of Chip Suppliers?

Development of chips for AI processors and high-end supercomputers may be the ticket for semi companies hammered by the PC market.

The first quarter of 2023 has so far played out as many analysts expected: suppliers that play heavily in the PC, server, and commodity memory markets have performed poorly, while companies with strong product lines in areas such as automotive and industrial have done better, in a few cases even performing above projected guidance.

Most electronics companies are forecasting flat or modest gains for the second quarter, with the hope of a long-awaited uptick coming in the second half of the year. Some of this will be fueled by improving conditions in PCs and servers. But in the long run, it may be AI, the market currently generating the most buzz, that proves more crucial to the health of many semiconductor and electronics companies.

In particular, the rapid emergence of generative AI, much of it spearheaded by tools such as ChatGPT, is prompting a flurry of moves by some of the largest semiconductor companies.

Strategic Collaboration

Just last week, Intel announced a strategic collaboration with Boston Consulting Group to enable generative AI solutions for enterprise clients using end-to-end Intel AI software and hardware. Boston Consulting Group is leveraging Intel’s AI supercomputer, powered by Intel Xeon Scalable processors and AI-optimized Habana Gaudi hardware accelerators, as well as production-ready hybrid cloud-scale software.

Earlier this year, Intel launched the 4th Gen Xeon Scalable processors along with the Xeon CPU Max Series and the Intel Data Center GPU Max Series, for applications involving AI, cloud, network, edge, and supercomputers. According to Intel, the 4th Gen Xeon processors offer a 2.9x average improvement in performance-per-watt efficiency for targeted workloads when utilizing built-in accelerators, up to 70 W of power savings per CPU in optimized power mode with minimal performance loss, and a 52% to 66% lower total cost of ownership.

Intel’s rival AMD has been establishing a presence in the high-end supercomputing market through products such as its EPYC processors and AMD Instinct accelerators. However, AMD has so far appeared less active in the AI market.

The company is also reportedly seeking to expand its presence in AI by developing AI-capable chips, code-named Athena. However, neither company has confirmed the rumor.

Playing Catch Up

Both Intel and AMD have their work cut out for them, however, as rival Nvidia currently holds a dominant position in processors for the AI market and seems intent on not relinquishing any of its market-leading share.

During its GTC event in March, Nvidia unleashed a slew of AI-related announcements. The company launched inference platforms for large language models and generative AI workloads, designed for video, image generation, large language model deployment, and graph recommendation models.

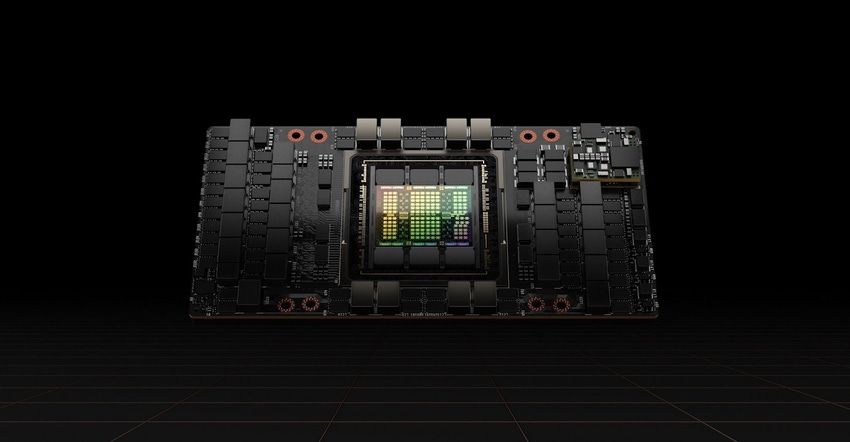

Nvidia also announced that its H100 Tensor Core GPU for AI applications has been adopted by several AI companies, including OpenAI, the creator of ChatGPT. On top of that, Nvidia announced that its DGX H100 supercomputer, based on the H100 GPU, is now available to customers.

Spencer Chin is a Senior Editor for Design News covering the electronics beat. He has many years of experience covering developments in components, semiconductors, and subsystems.
