Streaming Video Versus Machine Vision: How Do They Compare?

May 1, 2015

Machine vision and video streaming systems serve a variety of purposes, and each is best suited to particular applications. These roles reflect real differences between the two kinds of systems, which fall into three main categories: the type of lenses used, the resolution of the imaging elements, and the underlying software used to interpret the data.

Defining Machine Vision and Video Streaming

Essentially, video streaming systems capture continuous video for viewing by humans, whereas machine vision systems capture snapshots for viewing and analysis by computer software.

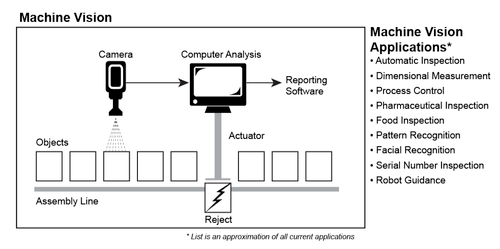

Machine vision systems capture discrete snapshots of products and analyze them for abnormalities. For example, a machine vision system might analyze a snapshot to confirm that the product has the correct size, color, and orientation, is free of cosmetic defects, and contains no foreign objects. Most of this analysis can be done with a single snapshot. Machine vision is used most frequently for automatic inspection, process control, counting objects, and measuring dimensions (Figure 1).
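To make the single-snapshot idea concrete, here is a minimal, hypothetical sketch of a size check. It assumes the snapshot is a monochrome image stored as a 2-D list of 0-255 gray levels, with a dark part on a bright background. The function names, the threshold of 128, and the 1-pixel tolerance are illustrative choices only. They are not taken from any real machine vision package.

```python
# Hypothetical sketch: inspect one monochrome snapshot (2-D list of 0-255 gray
# levels) by locating the dark "part" pixels and checking the part's size.

def bounding_box(image, threshold=128):
    """Return (top, left, bottom, right) of pixels darker than threshold, or None."""
    rows = [r for r, row in enumerate(image) if any(p < threshold for p in row)]
    cols = [c for c in range(len(image[0])) if any(row[c] < threshold for row in image)]
    if not rows:
        return None  # nothing darker than the threshold: no part in view
    return rows[0], cols[0], rows[-1], cols[-1]

def inspect_size(image, expected_w, expected_h, tol=1, threshold=128):
    """Pass/fail: are the part's width and height within +/- tol pixels?"""
    box = bounding_box(image, threshold)
    if box is None:
        return False
    top, left, bottom, right = box
    width = right - left + 1
    height = bottom - top + 1
    return abs(width - expected_w) <= tol and abs(height - expected_h) <= tol

# 6 x 8 snapshot: bright background (255) with a dark 3 x 4 part (20)
snapshot = [[255] * 8 for _ in range(6)]
for r in range(1, 4):
    for c in range(2, 6):
        snapshot[r][c] = 20

print(bounding_box(snapshot))        # (1, 2, 3, 5)
print(inspect_size(snapshot, 4, 3))  # True
print(inspect_size(snapshot, 6, 3))  # False
```

Real systems use calibrated optics and far more robust segmentation. The point here is only that a pass/fail decision can come from one frame, with no reference to earlier or later frames.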

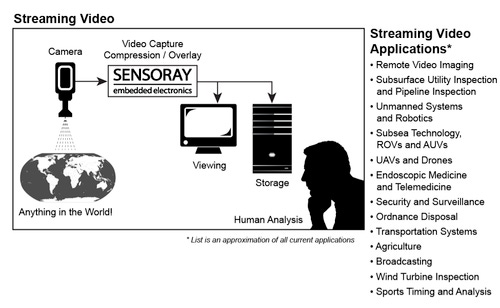

By contrast, video streaming systems capture an unbroken sequence of snapshots (frames) to form a continuous movie stream. Besides entertainment, video streaming is widely used in industrial and business applications, including video conferencing, robot guidance, security systems, and remotely operated vehicles such as unmanned aerial vehicles (UAVs) (Figure 2).

Unlike a machine vision snapshot, each new frame in a video stream has a time relationship to the other frames in the stream. Many applications, such as traffic monitoring, building security, and medical operations, also capture unrelated scenes simultaneously from multiple cameras.

Latency

Movie streams must be captured quickly and displayed with minimal delay (latency). For example, video of a moving robotic arm must be displayed without significant latency; otherwise the arm may cause damage or miss its target. Any delay of more than 0.1 second is considered significant. Surgical procedures using endoscopes and video electronics also need low-latency video.
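One way to reason about the 0.1-second figure is as a budget shared by every stage between camera and display. The sketch below assumes the stages run in series, so their delays simply add. The stage names and delay values are made up for illustration and are not measurements of any real product.

```python
# Hypothetical sketch: sum assumed per-stage delays of a camera-to-display
# pipeline and compare the total with the 0.1 s threshold cited in the article.

SIGNIFICANT_LATENCY_S = 0.1  # delays above this are considered significant

def end_to_end_latency_s(stage_delays_s):
    """Camera-to-display latency, assuming the stages add up in series."""
    return sum(stage_delays_s.values())

def is_latency_acceptable(stage_delays_s):
    """True if the total delay does not exceed the significance threshold."""
    return end_to_end_latency_s(stage_delays_s) <= SIGNIFICANT_LATENCY_S

# Illustrative stage delays in seconds, not measurements of any real product
pipeline = {
    "capture": 0.033,        # roughly one frame period at 30 frames/s
    "compression": 0.010,
    "transmission": 0.020,
    "decompression": 0.010,
    "display": 0.016,
}

print(f"{end_to_end_latency_s(pipeline):.3f} s")  # 0.089 s
print(is_latency_acceptable(pipeline))            # True
# Adding a 30 ms buffer pushes the total to 0.119 s, above the threshold
print(is_latency_acceptable({**pipeline, "buffer": 0.030}))  # False
```

The budget view shows why every stage matters. A single extra buffer of a frame or so can be enough to push an otherwise acceptable pipeline past the threshold.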

Some machine vision applications also require low latency, though not as low as streaming video. In these applications, the system must analyze each snapshot and drive a mechanical actuator quickly enough to keep pace with the manufacturing process.
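Keeping pace with a production line can be expressed as a per-part time budget. The sketch below makes several assumptions. Capture, analysis, and actuation are assumed to happen one after another for each part, with no overlap between consecutive parts. The line rates and stage times are illustrative values, not figures from the article.

```python
# Hypothetical sketch: time available per part on a production line, and
# whether capture + analysis + actuation fits inside it (stages assumed sequential).

def time_budget_per_part_s(parts_per_minute):
    """Seconds between consecutive parts at a given line rate."""
    return 60.0 / parts_per_minute

def keeps_pace(parts_per_minute, capture_s, analysis_s, actuator_s):
    """True if one part can be imaged, analyzed, and acted on before the next arrives."""
    return capture_s + analysis_s + actuator_s <= time_budget_per_part_s(parts_per_minute)

print(time_budget_per_part_s(300))        # 0.2
print(keeps_pace(300, 0.01, 0.08, 0.05))  # True  (0.14 s <= 0.2 s)
print(keeps_pace(600, 0.01, 0.08, 0.05))  # False (0.14 s > 0.1 s)
```

In this example, a 0.14-second inspection cycle is fine at 300 parts per minute but too slow at 600. The requirement is set by the line speed, not by a human viewer. Pipelined systems that overlap work on consecutive parts would need a more detailed model.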

Data Compression

Video streaming systems compress the movie stream so it can be transmitted over the available bandwidth of data links such as the Internet, radio links, and data cables (Ethernet). A movie stream can often be compressed by a factor of 100, which also greatly reduces the storage it needs. This is the same level of compression used to fit a movie on a DVD.
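The arithmetic behind a factor-of-100 reduction is straightforward. The sketch below assumes a standard-definition frame of 720 x 480 pixels, 24 bits per pixel, 30 frames/s, and a two-hour running time. All of these values are illustrative assumptions, and the calculation is not a description of how any particular codec or disc format works.

```python
# Hypothetical sketch: raw bit rate of an uncompressed video stream, the rate
# after ~100:1 compression, and the storage needed for a two-hour movie.
# Frame size, bit depth, frame rate, and duration are illustrative assumptions.

def raw_bit_rate_bps(width, height, bits_per_pixel, fps):
    return width * height * bits_per_pixel * fps

def compressed_bit_rate_bps(raw_bps, compression_factor=100):
    return raw_bps / compression_factor

def storage_bytes(bit_rate_bps, duration_s):
    return bit_rate_bps * duration_s / 8

raw = raw_bit_rate_bps(720, 480, 24, 30)
compressed = compressed_bit_rate_bps(raw)
movie = storage_bytes(compressed, 2 * 3600)

print(f"raw:        {raw / 1e6:.1f} Mbit/s")         # raw:        248.8 Mbit/s
print(f"compressed: {compressed / 1e6:.2f} Mbit/s")  # compressed: 2.49 Mbit/s
print(f"two hours:  {movie / 1e9:.2f} GB")           # two hours:  2.24 GB
```

Uncompressed, the same two hours would need roughly 100 times as much storage (about 224 GB). This is why streaming and distribution depend on compression.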

Unlike machine vision, streaming video must combine audio channels with the video streams and keep them synchronized to maintain lip sync. This is difficult over lengthy movie streams, but it matters: consider how annoying it is to watch a speaker or singer whose voice is out of step with the picture.
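Synchronization of this kind is often done by tagging audio and video with presentation timestamps and using the audio position as the reference clock during playback. This general approach is not described in the article itself. The sketch below is a minimal illustration of that idea. The 40 ms tolerance and the "wait/drop/show" policy are arbitrary assumptions, not values from any standard or product.

```python
# Hypothetical sketch: audio-as-master-clock lip sync. Compare a video frame's
# presentation timestamp (seconds) with the current audio clock and decide
# what to do with the frame. The 0.04 s tolerance is an illustrative choice.

SYNC_TOLERANCE_S = 0.04

def av_skew_s(video_pts_s, audio_clock_s):
    """Positive: the video frame is early relative to audio; negative: it is late."""
    return video_pts_s - audio_clock_s

def sync_action(video_pts_s, audio_clock_s, tol=SYNC_TOLERANCE_S):
    skew = av_skew_s(video_pts_s, audio_clock_s)
    if skew > tol:
        return "wait"  # frame is early: hold it until the audio catches up
    if skew < -tol:
        return "drop"  # frame is late: skip it so video catches up with audio
    return "show"      # within tolerance: display now

print(sync_action(1.000, 1.010))  # show
print(sync_action(1.200, 1.000))  # wait
print(sync_action(0.900, 1.000))  # drop
```

Small, continuous corrections like these keep drift from accumulating. That is why sync is harder to maintain over long streams than over short clips.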


Some video streaming products, including many of Sensoray's, can restore a compressed stream to its uncompressed state with little delay, so the camera data can be viewed almost instantly.

By contrast, machine vision systems avoid compressed data for their inspection function: compressed images would have to be decompressed before being passed to the analysis software, which costs time. In fact, many machine vision systems use uncompressed monochrome (black-and-white) images to simplify their software analysis. And unlike video streaming, which must fit its data into limited-bandwidth links, machine vision systems typically have very high-bandwidth transmission lines available.
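The sketch below illustrates the preference for monochrome. It converts RGB pixels to 8-bit gray using the ITU-R BT.601 luma weights (0.299, 0.587, 0.114). It then compares the per-frame data size of 24-bit color with 8-bit monochrome. The 1280 x 1024 frame size and 60 frames/s rate are illustrative assumptions, not the specifications of any particular camera.

```python
# Hypothetical sketch: one gray value per pixel instead of three color values.
# Converts RGB to 8-bit gray with ITU-R BT.601 luma weights, then compares
# uncompressed frame sizes. Frame size and rate are illustrative assumptions.

def rgb_to_gray(pixel):
    """Weighted sum of R, G, B (BT.601 luma), rounded to an 8-bit gray level."""
    r, g, b = pixel
    return round(0.299 * r + 0.587 * g + 0.114 * b)

def frame_bytes(width, height, bytes_per_pixel):
    return width * height * bytes_per_pixel

w, h, fps = 1280, 1024, 60
color = frame_bytes(w, h, 3)  # 24-bit RGB
mono = frame_bytes(w, h, 1)   # 8-bit monochrome

print(rgb_to_gray((255, 255, 255)))  # 255
print(rgb_to_gray((255, 0, 0)))      # 76
print(color // mono)                 # 3
print(f"mono stream: {mono * fps * 8 / 1e6:.0f} Mbit/s uncompressed")  # mono stream: 629 Mbit/s uncompressed
```

Monochrome cuts the data per frame to one third and gives analysis code a single intensity value per pixel to threshold or measure. Even so, an uncompressed stream at these assumed settings still needs several hundred Mbit/s. This is consistent with machine vision relying on high-bandwidth links rather than compression.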
