New in IIoT: Fog Computing Leverages Edge Devices and the Cloud

A new approach to IoT data collection and analysis uses an independent set of smart edge nodes to process data locally and selectively store subsets of data in the cloud.

February 17, 2016

Fog computing is a new approach that merges conventional networking with cloud networking. Its biggest uses are at the edges of networks: sensors and meters in homes, and industrial pumps and pipelines. An oil platform, for example, produces about 4 TB of data per day, but much of that is binary status data that effectively says “I’m on” until the one moment it says “I’m broken” or “I’m not working.” It makes little sense to send all of it to the cloud.
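The status-data point can be illustrated with a small filter that forwards a reading only when the status changes. This is a minimal sketch, not anything described by Moxa: the `changes_only` function, the `(timestamp, status)` tuple layout, and the status strings are all assumptions made for illustration.

```python
from typing import Iterable, Iterator, Tuple

# Assumed layout: (timestamp in seconds, status string such as "on" or "broken")
Reading = Tuple[float, str]


def changes_only(readings: Iterable[Reading]) -> Iterator[Reading]:
    """Yield a reading only when its status differs from the previous one."""
    last_status = None
    for timestamp, status in readings:
        if status != last_status:
            yield timestamp, status
            last_status = status


# Hypothetical samples: five readings collapse to two state changes.
samples = [(0.0, "on"), (1.0, "on"), (2.0, "on"), (3.0, "broken"), (4.0, "broken")]
forwarded = list(changes_only(samples))
```

Here only the first “on” and the first “broken” would leave the edge device; the repeated readings in between stay local.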

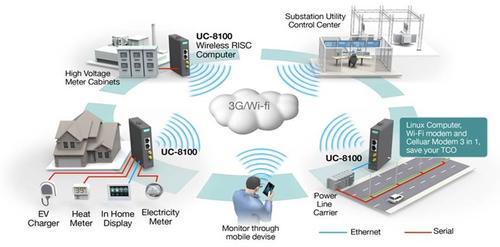

“It’s an architecture that’s used by end-user clients and puts a lot of the bandwidth processing at near-edge devices,” Thomas Nuth, global vertical manager for Moxa Inc., told Design News during a recent interview. “Examples would be switches or wireless devices that have embedded computers in them, to store and compute at the edge of the network. Then, the data can be pushed to central communication like a control center if necessary or desired. Edge or fog computing is essentially where edge meets cloud.”

Fog computing is making inroads in metering and smart grid applications for localized markets or microgrids, where large volumes of data are processed continuously and fed to a node or a group of nodes at that location. The data might be billing or metering data computed to show, for example, flow variations or the amount of time a device is on or off. Downtime and usage data can be processed or forecast locally, and alerts can be handled at the location so that only important data is pushed to the cloud.
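As one example of the local computation mentioned above, an edge node could reduce a stream of on/off samples to two totals before anything is sent upstream. The sketch below is an assumption-laden illustration: the `on_off_seconds` function and the `(timestamp, is_on)` sample format are hypothetical, and each state is assumed to hold until the next sample arrives.

```python
from typing import Dict, List, Tuple

# Assumed layout: (timestamp in seconds, True if the device is on)
Sample = Tuple[float, bool]


def on_off_seconds(samples: List[Sample], end_time: float) -> Dict[str, float]:
    """Total seconds spent on and off, holding each state until the next sample."""
    totals = {"on": 0.0, "off": 0.0}
    ordered = sorted(samples)
    for i, (start, is_on) in enumerate(ordered):
        # The last state is held until end_time (the end of the reporting window).
        stop = ordered[i + 1][0] if i + 1 < len(ordered) else end_time
        totals["on" if is_on else "off"] += max(0.0, stop - start)
    return totals


# Hypothetical 30-minute window: on for 10 min, off for 5 min, on for 15 min.
usage = on_off_seconds([(0.0, True), (600.0, False), (900.0, True)], end_time=1800.0)
```

Only the two totals in `usage` would need to reach the cloud, rather than every raw sample.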

The idea behind fog computing is for relatively inexpensive smart edge devices to run analytics on the factory floor in real time and then, on a scheduled basis such as every 30 minutes or every hour, push selected data to the cloud.

(Source: Moxa)

“The majority of innovation is tools for users to process and store data, and how do we make this work from a control perspective. A lot of companies and especially DCS vendors have come up with a concept they are calling cloud SCADA,” Nuth said. “While cloud isn’t necessarily worthwhile for the industrial space, what it does make sense for is control and monitoring of devices. Typically with SCADA, it has to be customized and wrapped around each application that it’s controlling.”

Nuth said the advantage of cloud SCADA is that users do not have to buy, implement, and commission a conventional system, which requires a large management team to set up and configure. Instead, they can drop in a cloud SCADA control system and customize it with drag-and-drop tools for the application being supported. An operating plant implementing such a system needs only one or two engineers to do the work, as opposed to a DCS vendor, a system integrator, and a whole host of people at the company to support it.

A central task in fog computing is determining which subsets of data are most useful to store in the cloud. The answer often depends on the devices, such as meters, and much of the solution is plug-and-play. If an operator has a group of oil wells producing conventional data that has always been processed and readable, such as flow, pressure, and production rate, Nuth said that data already passes through the network and through the embedded computer for processing before it is stored. At that point, selections can be made not only about what data to store but also about how often the data should be read.

The data is not pushed every second or millisecond; it is pushed every five minutes or every hour, unless an alert requires an immediate push. That is where the flexibility is beneficial: if a measurement crosses a temperature or pressure threshold, someone needs to know about it, and automatic corrective action needs to start immediately. At that point, the system starts phasing into the much-talked-about real-time computing philosophy.
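The push policy described here, routine uploads on a timer with an immediate upload on an alert, might be sketched as follows. The `EdgeBuffer` class, its `push` callback, and the default interval and threshold values are all hypothetical, chosen only to make the idea concrete. For simplicity the sketch sends the whole pending batch, whereas a real device would likely select a subset.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# Assumed layout: (timestamp in seconds, measured value)
Reading = Tuple[float, float]


@dataclass
class EdgeBuffer:
    push: Callable[[List[Reading]], None]  # hypothetical uplink to the cloud
    interval_s: float = 300.0              # routine push period (five minutes)
    threshold: float = 100.0               # illustrative alert limit
    _pending: List[Reading] = field(default_factory=list)
    _last_push: float = 0.0

    def add(self, timestamp: float, value: float) -> bool:
        """Buffer a reading; return True if it triggered a push."""
        self._pending.append((timestamp, value))
        alert = value >= self.threshold
        due = timestamp - self._last_push >= self.interval_s
        if alert or due:
            # Send everything buffered so far, then start a fresh batch.
            self.push(self._pending)
            self._pending = []
            self._last_push = timestamp
            return True
        return False


# Hypothetical usage: collect pushed batches in a list instead of a real uplink.
sent: List[List[Reading]] = []
buf = EdgeBuffer(push=sent.append)
buf.add(10.0, 50.0)   # buffered, nothing sent
buf.add(20.0, 120.0)  # crosses the threshold, so the batch is sent immediately
```

The design choice is that an alert flushes the buffered context along with the alarming reading, so whoever receives it sees the values that led up to the threshold crossing.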

Technology goals for automation suppliers include providing as much bandwidth as possible, along with open protocol configuration. Solutions also need to support gateway conversions, typically through a gateway that can convert fieldbus protocols to Ethernet.
