An Introduction to Chain of Trust in Embedded Applications

For embedded systems utilizing microcontrollers, real-time operating systems, or bare-metal systems, consider this approach to security.

September 13, 2022

Designing embedded systems with security in mind has become necessary in many industries. Connecting a device to the internet exposes it to remote attacks. Developers who want to build a secure embedded system must ensure that their devices implement a chain of trust. In this post, we will explore what a chain of trust is and the critical elements of one for embedded systems.

What is a Chain of Trust?

A chain of trust is a sequence of authentication and integrity checks that ensures only approved software runs on the system. For an embedded system, building a chain of trust requires that developers boot their system in stages. Each stage uses cryptographic keys, certificates, and hashes to verify that the software components loaded next have not been modified (integrity) and that they come from the developers (authentication).
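The staged checks described above can be sketched in a few lines of Python. This is a minimal illustration, not a real boot flow: the stage names, the `boot_chain` function, and the idea of passing each stage's expected digest as a tuple field are all assumptions made for the example. It also covers only the integrity half of the check; authentication additionally requires a signature over each image.

```python
import hashlib
import hmac


def sha256_hex(image: bytes) -> str:
    """Return the SHA-256 digest of an image as a hex string."""
    return hashlib.sha256(image).hexdigest()


def boot_chain(stages, trusted_first_digest):
    """Walk the boot stages in order, checking each before it runs.

    `stages` is a list of (name, image, digest_of_next_stage) tuples
    (a hypothetical layout chosen for this sketch). The digest of the
    first stage comes from the trust anchor; every later digest is
    supplied by the stage that was verified just before it.
    """
    expected = trusted_first_digest
    booted = []
    for name, image, next_digest in stages:
        # Constant-time comparison of the measured and expected digests.
        if not hmac.compare_digest(sha256_hex(image), expected):
            return booted, f"halted: {name} failed integrity check"
        booted.append(name)
        expected = next_digest
    return booted, "ok"


# Hypothetical images standing in for real firmware binaries.
application = b"user application v1"
bootloader = b"secure bootloader v1"
stages = [
    ("bootloader", bootloader, sha256_hex(application)),
    ("application", application, None),
]
print(boot_chain(stages, sha256_hex(bootloader)))
```

If any image is modified after the digests were recorded, the walk stops at that stage and nothing after it runs.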

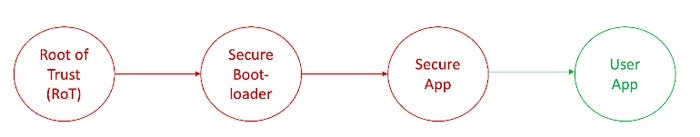

Embedded software developers working on application processors that run Linux have had a multistage boot process for years. However, developers working with microcontrollers, real-time operating systems, or bare-metal systems might find this quite different initially. In general, a chain of trust looks something like the figure above.

The Root of Trust (RoT)

Every system must have an anchor that forms the foundation for trust. That anchor is then used to validate every piece of software loaded on the system. A Root of Trust is an immutable process or identity used as the first entity in a trust chain. A good Root of Trust leverages hardware to build the trust anchor. For example, an SRAM-based physically unclonable function (PUF) derives device-unique keys from the power-up behavior of a microcontroller's SRAM.

In general, a Root of Trust is the collection of keys and certificates used to validate that the software running on a system is intact and authentic. A Root of Trust will also usually provide the system with secure services such as secure storage, attestation, and application partitions.

When the system first boots, it establishes the Root of Trust. Next, the Root of Trust authenticates and verifies the integrity of the secure bootloader. The bootloader carries a digital signature that the company creates when the image is built. At boot, that signature is verified against a hash calculated over the bootloader image. Successful verification demonstrates that the bootloader was placed there by the company and has not been modified.
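As a rough sketch of that hash-then-verify step, the Python below computes a SHA-256 hash of an image and checks a tag over it. One important assumption: the Python standard library has no asymmetric signature scheme, so HMAC-SHA256 with a shared key stands in for the digital signature here. A real device would typically verify an asymmetric signature (for example ECDSA) against a public key held by the Root of Trust. The function names and key value are hypothetical.

```python
import hashlib
import hmac


def sign_image(image: bytes, key: bytes) -> bytes:
    """Build-time step: hash the image, then tag the hash.

    HMAC is a stand-in for a real digital signature (assumption).
    """
    digest = hashlib.sha256(image).digest()
    return hmac.new(key, digest, hashlib.sha256).digest()


def verify_image(image: bytes, signature: bytes, key: bytes) -> bool:
    """Boot-time step: recompute the hash and check the tag over it."""
    digest = hashlib.sha256(image).digest()
    expected = hmac.new(key, digest, hashlib.sha256).digest()
    # compare_digest avoids leaking where the first mismatch occurs.
    return hmac.compare_digest(expected, signature)


# Hypothetical key and image used only for illustration.
root_key = b"example-root-of-trust-key"
bootloader_image = b"secure bootloader v1"
signature = sign_image(bootloader_image, root_key)

print(verify_image(bootloader_image, signature, root_key))
print(verify_image(bootloader_image + b"\x00", signature, root_key))
```

The first check passes because the image matches what was signed. The second fails because a single appended byte changes the hash.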

Secure Bootloaders

Secure bootloaders serve several purposes. First, they authenticate and verify the integrity of the secure and user applications that load after them. If an authentication or integrity failure occurs, the bootloader will not load those applications. The device will not operate normally but will remain in a safe state within the secure bootloader. Under normal conditions, the secure and user applications are validated and loaded into memory to perform their primary function.

Second, bootloaders are also used to update the secure and user application code. When software is updated, the bootloader retrieves the new version, stores it, and then authenticates and validates it. The bootloader performs the firmware update only if the new application comes from the company and is valid.
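The retrieve, store, authenticate, and install sequence might be modeled as follows. This is a sketch under stated assumptions: the `Bootloader` class, its staging slot, and the `make_signature` helper are hypothetical, and HMAC-SHA256 again stands in for a real asymmetric signature check.

```python
import hashlib
import hmac


def make_signature(image: bytes, key: bytes) -> bytes:
    """Hypothetical build-side helper; HMAC stands in for a signature."""
    return hmac.new(key, hashlib.sha256(image).digest(), hashlib.sha256).digest()


class Bootloader:
    """Toy model of a bootloader with one active slot and one staging slot."""

    def __init__(self, key: bytes, active_image: bytes):
        self._key = key
        self.active_image = active_image
        self.staged = None  # holds (image, signature) until applied

    def stage_update(self, image: bytes, signature: bytes) -> None:
        """Store the downloaded image without trusting it yet."""
        self.staged = (image, signature)

    def apply_update(self) -> bool:
        """Install the staged image only if it authenticates."""
        if self.staged is None:
            return False
        image, signature = self.staged
        self.staged = None  # the staging slot is consumed either way
        expected = make_signature(image, self._key)
        if not hmac.compare_digest(expected, signature):
            return False  # reject: keep running the current image
        self.active_image = image
        return True


key = b"example-update-key"  # hypothetical key for illustration
loader = Bootloader(key, active_image=b"app v1")
loader.stage_update(b"app v2", make_signature(b"app v2", key))
print(loader.apply_update(), loader.active_image)
```

Keeping the running image untouched until the new one has been verified means a failed or tampered update leaves the device on its last known-good software.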

Secure Applications (Secure Code Domain)

Secure applications are code that runs on the system in a secure execution environment. For example, an application might have cryptographic algorithms that run to encrypt data. A developer would not want those algorithms to be exposed to any old function within the application. So instead, there is a secure execution environment that is hardware isolated from other user application code. Hardware isolation is a critical element of secure embedded system design.

There are several ways to create hardware-isolated execution environments. First, developers can use multicore microcontrollers. One core hosts the secure execution environment, while the other core runs the user application. Next, developers can use single-core microcontrollers that support technologies like Arm’s TrustZone. Finally, developers can leverage memory protection units (MPUs) to provide different isolation levels within their applications.
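To make the MPU option a little more concrete, the sketch below models the kind of decision an MPU makes on each memory access. It is a deliberately simplified model and does not represent any particular MPU: the region names, addresses, sizes, and the single `unprivileged_access` flag are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Region:
    """One protected memory region in this simplified model."""
    name: str
    base: int
    size: int
    unprivileged_access: bool  # may unprivileged (user) code touch it?

    def contains(self, address: int) -> bool:
        return self.base <= address < self.base + self.size


# Hypothetical memory map: a small key store and a larger user RAM area.
REGIONS = [
    Region("secure_keys", 0x2000_0000, 0x1000, unprivileged_access=False),
    Region("user_ram", 0x2000_1000, 0x7000, unprivileged_access=True),
]


def access_allowed(address: int, privileged: bool) -> bool:
    """Return True if the access would be permitted in this model."""
    for region in REGIONS:
        if region.contains(address):
            return privileged or region.unprivileged_access
    return False  # addresses outside every region are treated as faults


print(access_allowed(0x2000_0010, privileged=False))  # user code -> key store
print(access_allowed(0x2000_0010, privileged=True))   # secure code -> key store
print(access_allowed(0x2000_2000, privileged=False))  # user code -> user RAM
```

In this model, user code is refused access to the key store while privileged code is allowed, which is the separation the hardware enforces without relying on the user application behaving correctly.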

User Applications (Non-Secure Code Domain)

The user application region is the last link in most chain-of-trust implementations for embedded devices. The user application is often validated and authenticated during boot, but it runs in the non-secure domain. It is the code that usually interacts with the outside world and follows the software models developers commonly use. If a security incident occurs, it is expected to happen in the user application code. While one might immediately think this is unacceptable, it’s essential to understand what exactly is in the user code.

User data, keys, and anything that needs to be secured would not be placed in the user application area. The user application runs in an execution environment that is hardware isolated from the secure execution environment. If someone manages to inject code or gain access to this region, they won’t be able to access any of the device’s secrets.

Conclusions

Security has become essential to a wide variety of embedded systems. In most cases, companies can no longer ignore the need for security in their devices. While security can seem daunting at first, there are a wide variety of tools and examples available that developers can leverage to secure their embedded systems. A key component of each secure system is a chain of trust that builds on a root of trust, uses a secure bootloader, and splits the application into secure and non-secure execution spaces.
