4 Reasons to Use Artificial Intelligence in Your Next Embedded Design

Beyond the buzz of artificial intelligence, there are compelling reasons to include AI tools in your engineering projects.

November 14, 2019

For many, just mentioning artificial intelligence brings up mental images of sentient robots at war with mankind and man’s struggle to avoid the endangered species list. While this may one day be a real scenario if (and it is perhaps a big if) mankind ever creates an artificial general intelligence (AGI), the more pressing matter is whether embedded software developers should be embracing or fearing the use of artificial intelligence in their systems. Here are four reasons why you may want to include machine learning in your next project.

Reason #1 – Marketing Buzz

From an engineering perspective, including a technology or methodology in a design simply because it has marketing buzz is something that every engineer should fight. The fact, though, is that if there is buzz around something, odds are it will help to sell the product. Technology marketing seems to come in cycles, but the underlying themes driving those cycles usually do turn out to be real.

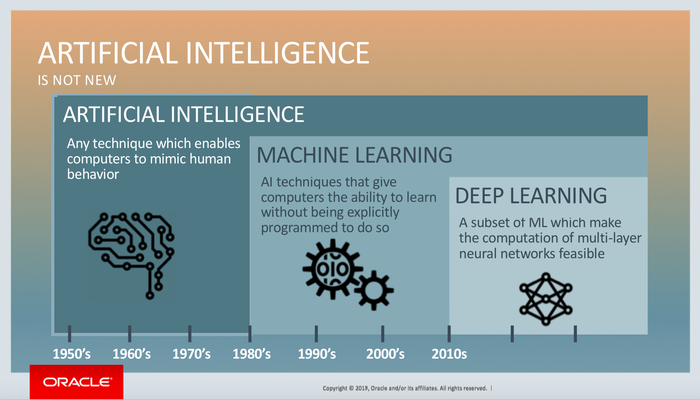

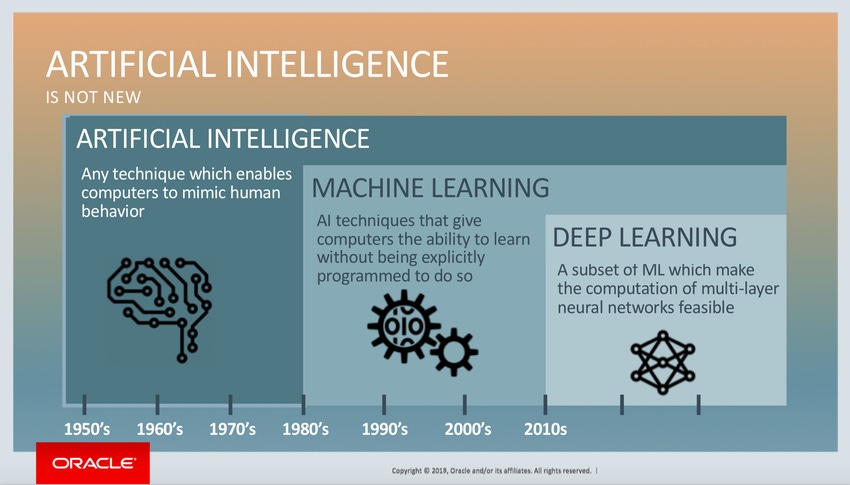

Artificial intelligence has progressed through the years, with deep learning on the way. (Image source: Oracle)

Machine learning has a ton of buzz around it right now. I’m finding this year that at industry events, machine learning typically makes up at least 25% of the talks. I’ve had several clients tell me that they need machine learning in their product, and when I ask them for their use case and why they need it, the answer is just that they need it. I’ve heard this same story from dozens of colleagues, and the push for machine learning seems relentless right now. The driver is not necessarily engineering, but simply leveraging industry buzz to sell product.

Reason #2 – The Hardware Can Support It

It’s truly amazing how much microcontrollers and application processors have changed in just the last few years. Microcontrollers, which I have always considered to be resource-constrained devices, now support megabytes of flash and RAM, have on-board cache and reach system clock rates of 1 GHz and beyond! These “little” controllers now even support DSP instructions, which means that they can efficiently execute inferences.

With the amount of computing power available on these processors, it may not require much additional cost on the BOM to be able to support machine learning. If there’s no added cost, and the marketing department is pushing for it, then leveraging machine learning might make sense simply because the hardware can support it!
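To make the point about DSP instructions a little more concrete, here is a minimal sketch of the integer multiply-accumulate (MAC) loop at the heart of a quantized neural network layer. This is the kind of work that DSP and SIMD instructions speed up. It is written in Python purely for readability; on a real target this loop would typically be C calling a vendor-optimized kernel. The function names, scales and values are all assumptions made up for illustration.

```python
# Sketch of an int8 quantized neuron. All names and scales are illustrative.

def quantize(values, scale):
    """Map floats to the int8 range by dividing by `scale`, rounding, and clamping."""
    return [max(-128, min(127, round(v / scale))) for v in values]

def mac_int8(inputs_q, weights_q, bias_q=0):
    """Integer multiply-accumulate; on hardware the sum would sit in a 32-bit register."""
    acc = bias_q
    for x, w in zip(inputs_q, weights_q):
        acc += x * w
    return acc

def neuron(inputs, weights, in_scale, w_scale):
    """Quantize, accumulate in integers, rescale to float, then apply ReLU."""
    acc = mac_int8(quantize(inputs, in_scale), quantize(weights, w_scale))
    return max(0.0, acc * in_scale * w_scale)

# Example: two inputs, two weights, both quantized with a scale of 0.25.
result = neuron([1.0, 2.0], [0.5, 0.25], 0.25, 0.25)
```

Because every multiplication and addition inside `mac_int8` works on small integers, a processor with DSP extensions can generally process several of them per instruction, which is a large part of why inference fits on these parts at all.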

Reason #3 – It May Simplify Development

Machine learning has risen on the “buzz” charts for a reason. It has become a nearly indispensable tool for the IoT and the cloud, and it can dramatically simplify software development. For example, have you ever tried to code up an application that can recognize gestures or handwriting, or classify objects? These are really simple problems for a human brain to solve, but extremely difficult to write a program for. In certain problem domains such as voice recognition, image classification and predictive maintenance, machine learning can simplify the development process and speed up development.

With an ever-expanding IoT and more data than one could ever hope for, it’s becoming far easier to classify large datasets and then train a model to use that information to generate the desired outcome for the system. In the past, developers may have had configuration values or acceptable operating thresholds that were constantly checked during runtime. These often involved lots of testing and a fair amount of guessing. Through machine learning, much of this can be avoided by providing the data, developing a model and then deploying the inference on an embedded system.
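As a rough illustration of the "provide the data, develop a model, deploy the inference" flow, the sketch below uses a nearest-centroid classifier, one of the simplest models that can stand in for hand-tuned thresholds. It is not a recommendation of any particular algorithm, and the sensor features, readings and labels are hypothetical values invented for this example.

```python
# Nearest-centroid classifier: training reduces labeled data to one mean
# vector per class; inference compares a new reading to those means.

def train_centroids(samples, labels):
    """Return {label: mean feature vector} computed from labeled samples."""
    sums, counts = {}, {}
    for features, label in zip(samples, labels):
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}

def classify(centroids, features):
    """Return the label whose centroid is closest (squared Euclidean distance)."""
    def dist2(center):
        return sum((f - c) ** 2 for f, c in zip(features, center))
    return min(centroids, key=lambda label: dist2(centroids[label]))

# Hypothetical (vibration_rms, temperature_c) readings from a motor.
samples = [(0.2, 40.0), (0.3, 42.0), (0.25, 41.0),
           (1.1, 60.0), (1.3, 65.0), (1.2, 62.0)]
labels = ["normal", "normal", "normal", "faulty", "faulty", "faulty"]

model = train_centroids(samples, labels)      # done offline, on a PC or in the cloud
print(classify(model, (0.28, 43.0)))          # the part that would run on the device
print(classify(model, (1.0, 58.0)))
```

Only the handful of numbers in `model` and the small `classify` routine would need to live on the embedded target. In a real design the features would usually be normalized first, since here the temperature values dominate the distance.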

Reason #4 – To Expand Your Solution Toolbox

One aspect of engineering that I absolutely love is that the tools and technologies that we use to solve problems and develop products are always changing. Just look at how you developed an embedded system one, three and five years ago! While some of your approaches have undoubtedly stayed constant, there should have been considerable improvements and additions to your processes that have improved your efficiency and the way that you solve problems.

Machine learning is yet another tool to add to the toolbox, one that in time will prove to be indispensable for developing embedded systems. However, that tool will never be sharpened if developers don’t start to learn about, evaluate and use it. While it may not make sense to deploy a machine learning solution for a product today or even next year, understanding how it applies to your product and customers, along with its advantages and disadvantages, can help to ensure that when the technology is more mature, it will be easier to leverage for product development.

Real Value Will Follow the Marketing Buzz

There are a lot of reasons to start using machine learning in your next design cycle. While I believe marketing buzz is one of the biggest driving forces for “tinyML” right now, I also believe that real applications are not far behind and that developers need to start experimenting today if they are going to be successful tomorrow. While machine learning for embedded holds great promise, there are several issues that I think should strike a little bit of fear into the cautious developer such as:

How to test and verify their models

Hackers’ attempts to foil or trick their model

How to secure their application code

These are concerns for a later time though, once we’ve mastered just getting our new tool to work the way that we expect it to.

Jacob Beningo is an embedded software consultant who currently works with clients in more than a dozen countries to dramatically transform their businesses by improving product quality, cost and time to market. He has published more than 200 articles on embedded software development techniques, is a sought-after speaker and technical trainer, and holds three degrees, which include a Masters of Engineering from the University of Michigan. Feel free to contact him at [email protected] or at his website, and sign up for his monthly Embedded Bytes Newsletter.
