Will AI Learning Help Fast-Charging Batteries?

Industry experts debated where AI might be useful for fast-charging battery tech during the Battery Show & EV Tech Digital Days panel.

January 4, 2021

Researchers from MIT, Stanford, and the Toyota Research Institute (TRI) have used artificial intelligence (AI) to predict the performance of lithium-ion batteries. Their machine learning-based system was trained on data generated in a test chamber where hundreds of batteries were charged and discharged as quickly as possible. That data was then used to teach the AI how to build a better battery.

Fast-charging batteries are considered crucial to the adoption of electric vehicles (EVs), because they would let recharging an EV match the convenience and speed of filling up a tank at a gas station. But will AI be enough to solve this problem? To answer that question, The Battery Show assembled a live, virtual panel of leading experts that included AK Srouji, CTO at Romeo Power Technology; Erik Stafl, President at Stafl Systems; and Jarvis Tou, Executive VP at Enevate Corporation. Design News senior editor John Blyler moderated the panel. What follows is a portion of the Q&A session that focused on AI and battery technologies.

Question: Can the panel speak on the implementation of AI algorithms to enable fast charging and low degradation?

Erik Stafl: Basically, your battery management system (BMS) should have an algorithm that continually updates its estimate of the state of each cell group in the pack. You’ll want to ask: What is the capacity of that cell group? What is its impedance? What is the overall delta between that cell group's performance and the others'? As you answer these questions, you’ll need to adjust the overall algorithm to maximize your charge rate while minimizing the degradation of each cell group.
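The per-cell-group bookkeeping described here can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Stafl Systems' algorithm: the `CellGroupState` fields, the C-rate cap, the voltage-headroom rule for impedance, and the temperature cutoff are all hypothetical. The sketch shows how, in a series string, the weakest cell group ends up setting the charge current for the whole pack.

```python
from dataclasses import dataclass


@dataclass
class CellGroupState:
    """Latest BMS estimates for one parallel cell group (hypothetical fields)."""
    name: str
    capacity_ah: float    # estimated usable capacity, amp-hours
    impedance_ohm: float  # estimated DC internal resistance, ohms
    temperature_c: float  # measured group temperature, deg C


def capacity_deltas(groups):
    """Relative capacity of each group versus the pack mean (negative = weaker)."""
    mean_cap = sum(g.capacity_ah for g in groups) / len(groups)
    return {g.name: (g.capacity_ah - mean_cap) / mean_cap for g in groups}


def max_charge_current(groups, c_rate_limit=2.0, v_headroom=0.15, temp_limit_c=45.0):
    """Charge current (A) allowed for a series string of cell groups.

    Every group in a series string carries the same current, so the pack can
    only charge as fast as its most constrained group. All limits here are
    illustrative assumptions, not values from the panel:
      c_rate_limit - maximum C-rate for any group
      v_headroom   - maximum allowed I*R voltage rise (V) per group
      temp_limit_c - stop charging entirely if any group is this hot
    """
    per_group_limits = []
    for g in groups:
        if g.temperature_c >= temp_limit_c:
            return 0.0  # a hot group halts charging for the whole string
        c_rate_amps = c_rate_limit * g.capacity_ah  # lower capacity -> lower current
        ir_amps = v_headroom / g.impedance_ohm      # higher impedance -> lower current
        per_group_limits.append(min(c_rate_amps, ir_amps))
    return min(per_group_limits)


groups = [
    CellGroupState("g1", capacity_ah=50.0, impedance_ohm=0.0020, temperature_c=30.0),
    CellGroupState("g2", capacity_ah=48.0, impedance_ohm=0.0025, temperature_c=31.0),
]
print(capacity_deltas(groups))
print(max_charge_current(groups))
```

With these made-up numbers, g2's higher impedance sets the limit at roughly 60 A, even though g1 alone could accept 75 A.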

You have to think about each cell group individually because sometimes one cell group ages faster than the rest. Obviously, we always want an ideal battery with uniform temperature, capacity, and impedance, but in reality that's not always achieved. So you have to make sure that you're not getting into corner cases where you have thermal runaway or differential aging across the different cell groups.

The main point is that you're monitoring this at the cell-group level and updating all the different parameters at each charge event. These updates enable the AI algorithm to actually learn and adjust as it goes, like a deep learning algorithm.
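One simple way to update each cell group's parameters at every charge event is an exponential moving average, followed by a check for groups drifting away from the rest of the pack. This is a hedged stand-in, not the deep learning approach the panelist refers to: the smoothing factor `alpha`, the 10% tolerance, and the dictionary layout are assumptions chosen for illustration.

```python
import statistics


def ema(previous, measured, alpha=0.2):
    """Blend a new per-charge measurement into the running estimate."""
    return (1.0 - alpha) * previous + alpha * measured


def update_after_charge(estimates, measurements, alpha=0.2):
    """Return new per-group estimates after one charge event.

    estimates and measurements map group name -> {parameter name: value},
    e.g. {"g1": {"capacity_ah": 50.0, "impedance_ohm": 0.002}}.
    """
    return {
        group: {key: ema(value, measurements[group][key], alpha) for key, value in params.items()}
        for group, params in estimates.items()
    }


def divergent_groups(estimates, key="impedance_ohm", tolerance=0.10):
    """Groups whose parameter differs from the pack median by more than `tolerance`."""
    median = statistics.median([params[key] for params in estimates.values()])
    return [group for group, params in estimates.items()
            if abs(params[key] - median) / median > tolerance]


estimates = {g: {"capacity_ah": 50.0, "impedance_ohm": 0.0020} for g in ("g1", "g2", "g3")}
# Hypothetical charge event: g3 reads a much higher impedance than its neighbors.
measured = {
    "g1": {"capacity_ah": 49.9, "impedance_ohm": 0.0020},
    "g2": {"capacity_ah": 50.0, "impedance_ohm": 0.0021},
    "g3": {"capacity_ah": 49.0, "impedance_ohm": 0.0040},
}
estimates = update_after_charge(estimates, measured)
print(divergent_groups(estimates))
```

A larger `alpha` reacts faster to real aging but also to measurement noise; production BMS designs more commonly use model-based estimators such as Kalman filters for this job.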

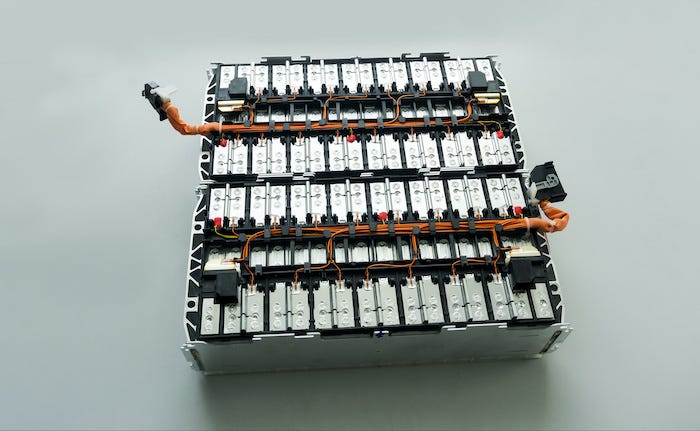

|

Electric vehicle lithium battery pack and wiring connections internally between cells. |

AK Srouji: From a materials science point of view, people are working with AI to try to identify new chemical couples, be it electrolyte additives or other compounds. That's AI intersecting materials science. The hope is that this accelerates the discovery of new couples, new compounds, or stable chemistries, because classical chemistry takes a lot of time. Researcher ideas and AI insights, coupled with high-throughput experimentation, will help accelerate the rate of discovery in, say, lithium-ion batteries. Material improvements discovered this way could lead to faster charging rates. But we need to turn the algorithms in that direction.

Coming back to the battery management system, we have a machine learning team that’s focused on the practical problem of battery aging. We can reproduce aging in the lab as a function of high voltage, low voltage, charging rate, temperature, et cetera. We can then translate this data to the module and the pack and apply those formulas.
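Translating lab aging data into something that can be applied at the module and pack level usually means fitting an empirical model. The sketch below uses one hypothetical form, not Romeo Power's formulas: it fits the logarithm of capacity fade per cycle as a linear function of charge C-rate, an Arrhenius-style inverse-temperature term, and upper cutoff voltage, then applies the fit to each cell group and assumes the pack ages as fast as its worst group. The coefficients used to generate the synthetic lab data are made up purely to exercise the code.

```python
import numpy as np


def design_matrix(c_rate, temp_c, v_max):
    """Columns: intercept, C-rate, 1000/T (Arrhenius-style), upper cutoff voltage."""
    c_rate = np.atleast_1d(np.asarray(c_rate, dtype=float))
    temp_k = np.atleast_1d(np.asarray(temp_c, dtype=float)) + 273.15
    v_max = np.atleast_1d(np.asarray(v_max, dtype=float))
    return np.column_stack([np.ones_like(temp_k), c_rate, 1000.0 / temp_k, v_max])


def fit_aging_model(c_rate, temp_c, v_max, fade_per_cycle):
    """Least-squares fit of log(capacity fade per cycle) on lab test conditions."""
    X = design_matrix(c_rate, temp_c, v_max)
    coef, *_ = np.linalg.lstsq(X, np.log(fade_per_cycle), rcond=None)
    return coef


def predict_fade(coef, c_rate, temp_c, v_max):
    """Predicted fractional capacity fade per cycle under the given conditions."""
    return np.exp(design_matrix(c_rate, temp_c, v_max) @ coef)


def pack_fade(coef, group_conditions):
    """Assumption: the pack ages as fast as its worst cell group."""
    return max(float(predict_fade(coef, *cond)[0]) for cond in group_conditions)


# Synthetic "lab" data generated from made-up coefficients, for illustration only.
rng = np.random.default_rng(0)
n = 40
c_rate = rng.uniform(0.5, 4.0, n)
temp_c = rng.uniform(10.0, 45.0, n)
v_max = rng.uniform(4.0, 4.3, n)
true_coef = np.array([-2.0, 0.3, -2.5, 1.0])
fade = np.exp(design_matrix(c_rate, temp_c, v_max) @ true_coef)

coef = fit_aging_model(c_rate, temp_c, v_max, fade)
# Each tuple is (C-rate, temperature in deg C, cutoff voltage) for one cell group.
print(pack_fade(coef, [(2.0, 25.0, 4.2), (2.0, 38.0, 4.2)]))
```

Because the synthetic data is noise-free, the fit recovers the generating coefficients; real lab data would need held-out validation before the model is trusted in a pack.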

Machine learning and AI methods are needed to handle the very large, uncontrolled data sets gathered from the field, say, from all the batteries in a fleet of cars or trucks. Variables in fleet conditions would include how fast different drivers drive at various times of day, the charging rates that occur throughout the day, etc.
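Before uncontrolled fleet data can feed an aging model, the raw event logs have to be cleaned and summarized. A minimal sketch, assuming a hypothetical `ChargeEvent` record per charging session: it drops sessions with missing or impossible readings, then computes per-vehicle features such as mean charge rate, the share of fast-charge sessions, and the share of overnight charging. The 1C fast-charge threshold and the 22:00 to 06:00 overnight window are illustrative choices, not values from the panel.

```python
import math
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ChargeEvent:
    vehicle_id: str
    hour_of_day: int  # local start hour, 0-23
    c_rate: float     # average charge rate during the session
    temp_c: float     # pack temperature at the start of the session


def is_valid(event):
    """Field logs are messy: drop sessions with missing or impossible readings."""
    return (math.isfinite(event.c_rate) and event.c_rate > 0
            and math.isfinite(event.temp_c) and 0 <= event.hour_of_day <= 23)


def vehicle_features(events, fast_c_rate=1.0):
    """Summarize each vehicle's charging behavior as model-ready features."""
    by_vehicle = defaultdict(list)
    for event in events:
        if is_valid(event):
            by_vehicle[event.vehicle_id].append(event)

    features = {}
    for vehicle_id, sessions in by_vehicle.items():
        n = len(sessions)
        features[vehicle_id] = {
            "n_sessions": n,
            "mean_c_rate": sum(s.c_rate for s in sessions) / n,
            "fast_charge_share": sum(s.c_rate >= fast_c_rate for s in sessions) / n,
            "overnight_share": sum(s.hour_of_day >= 22 or s.hour_of_day < 6 for s in sessions) / n,
            "mean_temp_c": sum(s.temp_c for s in sessions) / n,
        }
    return features


log = [
    ChargeEvent("truck-1", 23, 2.0, 25.0),
    ChargeEvent("truck-1", 12, 0.5, 30.0),
    ChargeEvent("truck-2", 3, 0.3, 10.0),
    ChargeEvent("truck-2", 4, float("nan"), 10.0),  # corrupted reading, dropped
]
print(vehicle_features(log))
```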

Jarvis Tou: Both AK and Erik have been really focused on using AI in the BMS for real-time data from cars in the field.

The other area where AI helps is R&D, for example, the use of physics-based modeling and deep machine learning on very, very large datasets. When you have a lot of channels of test equipment (we have about 2,500 test channels that generate terabytes of data), AI can be used to mine that data, looking for the right patterns. It’s good for optimization and for tweaking things to make minor changes, but I don’t think it’s mature enough yet to enable breakthrough discoveries. It is very good at taking the data you have and finding ways to optimize it, versus trying to find something where you don't have data.
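Mining results from thousands of test channels can start with plain statistics well before any deep learning. The hypothetical sketch below takes `(channel, protocol, cycle_life)` records, ranks charging protocols by median cycle life, and flags channels whose result is an outlier within their protocol using the modified z-score (based on the median absolute deviation, with the commonly used 3.5 cutoff). It also illustrates the limitation raised here: it can only rank protocols that were actually tested and says nothing about conditions with no data.

```python
import statistics
from collections import defaultdict


def summarize_channels(results, z_cutoff=3.5):
    """Rank protocols by median cycle life and flag outlier channels.

    results: iterable of (channel_id, protocol, cycle_life) tuples.
    Outliers use the modified z-score 0.6745 * |x - median| / MAD.
    """
    by_protocol = defaultdict(list)
    for channel, protocol, life in results:
        by_protocol[protocol].append((channel, life))

    medians = {p: statistics.median([life for _, life in rows]) for p, rows in by_protocol.items()}
    ranking = sorted(medians, key=medians.get, reverse=True)

    outliers = []
    for protocol, rows in by_protocol.items():
        med = medians[protocol]
        mad = statistics.median([abs(life - med) for _, life in rows])
        if mad == 0:
            continue  # no spread to judge against
        outliers.extend(channel for channel, life in rows
                        if 0.6745 * abs(life - med) / mad > z_cutoff)
    return ranking, outliers


results = [
    ("ch1", "A", 1000), ("ch2", "A", 1010), ("ch3", "A", 990),
    ("ch4", "A", 1005), ("ch5", "A", 400),  # ch5 may be a bad cell or a faulty channel
    ("ch6", "B", 800), ("ch7", "B", 820), ("ch8", "B", 810),
]
print(summarize_channels(results))
```

The median-based score is used instead of a mean and standard deviation so that a single failed channel does not mask itself by inflating the spread.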

The Battery Show panel (top left: John Blyler, Design News; top right: Jarvis Tou, Enevate Corp.; bottom left: Erik Stafl, Stafl Systems; bottom right: AK Srouji, Romeo Power Technology)

John Blyler is a Design News senior editor, covering the electronics and advanced manufacturing spaces. With a BS in Engineering Physics and an MS in Electrical Engineering, he has years of hardware-software-network systems experience as an editor and engineer within the advanced manufacturing, IoT and semiconductor industries. John has co-authored books related to system engineering and electronics for IEEE, Wiley, and Elsevier.
