The Battle of AI Processors Begins in 2018

2018 will be the start of what could be a longstanding battle between chipmakers to determine who creates the hardware that artificial intelligence lives on.

December 18, 2017

CPUs, GPUs, TPUs (tensor processing units), and even FPGAs. It's hard to tell who started the fight over artificial intelligence (AI), and it's too soon to tell who will finish it. But 2018 will be the start of what could be a longstanding battle between chipmakers to determine who creates the hardware that AI lives on.

When Intel made its latest AI hardware announcement at Automobility LA 2017, it wasn't throwing down a gauntlet. Instead, it was only the latest in a series of one-upmanships by a handful of major tech giants – all seeking to stake their claim in the AI hardware landscape. Whoever rules AI stands to become one of the dominant forces, if not the dominant force, in many industries including manufacturing, automotive, IoT, medical, and even entertainment.

On the hardware front, artificial intelligence is locked into its own personal Game of Thrones, with different houses all vying for supremacy and to create the chip architecture that will become the standard for AI technologies – particularly deep learning and neural networks.

Analysts at both Research and Markets and Technavio predict that the global AI chip market will grow at a compound annual growth rate of about 54% between 2017 and 2021.
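To put a 54% compound annual growth rate in perspective, the short sketch below compounds it over the forecast window. It assumes four annual compounding steps between 2017 and 2021 and a market size normalized to 1.0, since neither firm's dollar figures are given here. The function and variable names are illustrative only.

```python
def compound_growth(start_value: float, annual_rate: float, years: int) -> float:
    """Return start_value after compounding annual_rate for the given number of years."""
    return start_value * (1.0 + annual_rate) ** years


# Assumption: 2017 -> 2021 is treated as four annual compounding steps.
growth_multiple = compound_growth(1.0, 0.54, 4)
print(f"Market size relative to 2017: {growth_multiple:.2f}x")
```

Under those assumptions, the market would end the period at roughly 5.6 times its 2017 size.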

Raghu Raj Singh, a lead analyst for embedded systems research at Technavio, said the need for high-power hardware that can handle the demands of deep learning is a key driver of this growth. “The high growth rate of hardware is due to the increasing need for hardware platforms with high computing power, which helps run algorithms for deep learning. The growing competition between startups and established players is leading the development of new AI products, both for hardware and software platforms that run deep learning programs and algorithms.”

Competition is heating up. AI is set to be the next big frontier for computing hardware and may be the most significant battleground for computer hardware since the emergence of mobile computing and the Internet.

So, how did we get here and who are the big players?

Good ol' CPUs

When level 5 autonomous cars, those that require no human intervention to operate, hit the roads, they will be among the smartest and most sophisticated machines ever created. Naturally, self-driving vehicles have become one of the main targets for AI, and it's where chipmaker Intel is looking to firmly stake its claim.

Rather than conducting all of its R&D in-house, Intel has used acquisitions to establish itself in the AI landscape. In August of 2016 Intel purchased Nervana Systems, a maker of neural network processors.

Neural networks are capable of performing a wide range of tasks very efficiently, but in order to do these tasks a network first has to be taught how to do them. A neural network to perform something as basic as recognizing pictures of dogs has to first be taught what a dog looks like, ideally across all breeds. This can mean exposing the network to thousands, if not millions, of images of dogs – a task that can be enormously time consuming without enough processing power.

In November of 2016, mere months after acquiring Nervana, Intel announced a new line of processors – the Nervana platform – targeted directly at AI-related applications such as training neural networks. “We expect the Intel Nervana platform to produce breakthrough performance and dramatic reductions in the time to train complex neural networks,” said Diane Bryant, executive vice president and general manager of the Data Center Group at Intel. “Before the end of the decade, Intel will deliver a 100-fold increase in performance that will turbocharge the pace of innovation in the emerging deep learning space.”

In March of this year, Intel made another high-profile AI acquisition in Mobileye, a developer of machine learning-based advanced driver assistance systems (ADAS), to the tune of about $15 billion. The logic behind Intel's purchases became clear almost immediately. The chipmaker wanted to stake its claim in the autonomous vehicle space, and perhaps in doing so also establish itself as a key provider of machine learning-focused hardware.

In November at the Automobility LA trade show and conference in Los Angeles, Intel CEO Brian Krzanich called autonomous driving the biggest game changer of today as the company announced that its acquisition of Mobileye had yielded a new SoC, the EyeQ5, that boasted twice the deep learning performance efficiency of its closest competition – Nvidia's Xavier deep learning platform.

Tera operations per second (TOPS) is a common performance metric for high-performance SoCs. TOPS per watt extends that measurement to describe performance efficiency: the higher the TOPS per watt, the more efficient the chip. Deep learning (DL) TOPS refers to performance on deep learning-related operations. According to Intel's simulation-based testing, the EyeQ5 is expected to deliver 2.4 DL TOPS per watt, more than double the efficiency of Nvidia's Xavier, which delivers about 1 DL TOPS per watt.

Speaking with Design News, Doug Davis, senior vice president and general manager of Intel's Automated Driving Group (ADG), said that Intel chose DL TOPS per watt because it wanted to emphasize processor efficiency over other metrics. “Focusing on DL TOPS per watt is really a good indicator of power consumption, but also if you're thinking about it, it's also weight, cost, and cooling, so we really felt like efficiency was the important thing to focus on,” Davis said. “Think of electric vehicles [EVs], for example. With EVs it's all about range, but if my autonomous computing platform consumes too much power it reduces my range.”

Davis added, “There's always a lot of conversation around absolute performance, but when we looked at it we wanted to come at it from a more practical standpoint as we thought about different types of work loads. Deep learning is really key in being able to recognize objects and make decisions and do that as quickly and efficiently as possible.”

Nvidia, however, has disputed Intel's numbers, particularly given that the EyeQ5's estimates are based on simulations and the SoC won't be available for two years. In a statement to Design News, Danny Shapiro, senior director of automotive at Nvidia, said: “We can't comment on a product that doesn't exist and won't until 2020. What we know today is that Xavier, which we announced last year and will be available starting in early 2018, delivers higher performance at 30 TOPS compared to EyeQ5's purely simulated prediction of 24 TOPS two years from now.”
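The two companies are quoting different kinds of numbers: Intel an efficiency figure (TOPS per watt), Nvidia an absolute one (TOPS). As a rough sketch, dividing one by the other gives the power draw each claim implies. This assumes the TOPS figures in Nvidia's statement and the DL TOPS per watt figures from Intel's testing describe the same kind of operation, which neither company confirms here. The function name is illustrative.

```python
def implied_power_watts(tops: float, tops_per_watt: float) -> float:
    """Power draw implied by an absolute throughput and an efficiency figure."""
    if tops_per_watt <= 0:
        raise ValueError("tops_per_watt must be positive")
    return tops / tops_per_watt


# Figures as quoted in the article; combining them this way is an assumption.
claims = {
    "EyeQ5 (simulated)": (24.0, 2.4),  # (TOPS, DL TOPS per watt)
    "Xavier": (30.0, 1.0),
}

implied = {name: implied_power_watts(t, e) for name, (t, e) in claims.items()}
for name, watts in implied.items():
    print(f"{name}: about {watts:.0f} W")
```

On these numbers, Xavier's higher absolute throughput would come at roughly three times the implied power, which is the tradeoff Davis points to when he talks about EV range.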

Are GPUs Destined for AI?

Which brings us to GPUs. Call it a happy accident or serendipity, but GPU makers have found themselves holding the technology that could be at the forefront of the AI revolution. Once thought of as a complementary unit to CPUs (many CPUs have GPUs integrated into them to handle graphics processing), GPUs have expanded outside of their graphics- and video-centric niche and into the domain of deep learning, where GPU manufacturers say they offer far superior performance over CPUs.

Nvidia says its Titan V GPU is the most powerful PC GPU ever developed for deep learning. (Image source: Nvidia)

While there are a handful of companies in the GPU marketplace, no company is more synonymous with the technology than Nvidia. According to a report by Jon Peddie Research, Nvidia beat out both major competitors, AMD and Intel, with an overall 29.53% increase in GPU shipments in the third quarter of 2017. AMD's shipments increased by 7.63%, while Intel's increased by 5.01%. Naturally, this is mainly driven by the video gaming market, but analysts at Jon Peddie Research believe the demand for high-end performance in applications related to cryptocurrency mining also contributed to the shipments.

Demand for processors that can handle specific high-performance tasks, such as cryptocurrency mining and AI applications, is exactly why GPUs find themselves at the forefront of the AI hardware conversation. GPUs contain hundreds of cores that can run thousands of software threads simultaneously, all while being more power efficient than CPUs. Whereas CPUs are generalized and tend to jump around, performing a number of different tasks, GPUs excel at performing the same operation over and over again on huge batches of data. This key difference is what gives GPUs their name and makes them so adept at handling graphics, since graphics processing involves thousands of tiny calculations all happening at once. That same ability also makes GPUs ideal for tasks like the aforementioned neural network training.

Just this December, Nvidia announced the Titan V, a PC-based GPU designed for deep learning. The new GPU is based on Nvidia's Volta architecture, which takes advantage of a new type of core technology that Nvidia calls Tensor Cores. In mathematical terms, the dictionary defines a tensor as “a mathematical object analogous to but more general than a vector, represented by an array of components that are functions of the coordinates of a space.” What Nvidia has done is develop cores with a complex architecture that is purpose-built for handling the demands of deep learning and neural network computing.

The Titan V contains 21 billion transistors and delivers 110 teraflops of deep learning performance. Nvidia is targeting the Titan V specifically at developers who work in AI and deep learning. Company founder and CEO Jensen Huang said in a press statement that the Titan V is the most powerful GPU ever developed for the PC. “Our vision for Volta was to push the outer limits of high performance computing and AI. We broke new ground with its new processor architecture, instructions, numerical formats, memory architecture and processor links. With Titan V, we are putting Volta into the hands of researchers and scientists all over the world.”

A World Made of Tensors

Perhaps no company is more invested in the concept of tensors than Google. In the last year the search giant has released an already-popular, open-source framework for deep learning development dubbed TensorFlow. As described by Google, “TensorFlow is an open-source software library for numerical computation using data flow graphs. Nodes in the graph represent mathematical operations, while the graph edges represent the multidimensional data arrays (tensors) communicated between them. The flexible architecture allows you to deploy computation to one or more CPUs or GPUs in a desktop, server, or mobile device with a single API.”
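The data flow graph idea in Google's description can be illustrated without TensorFlow itself. The sketch below is plain Python and is not TensorFlow's API: the `Node` class and the `constant`, `matmul`, and `add` helpers are hypothetical names invented for this example. Nodes hold operations, and the nested lists passed between them stand in for tensors.

```python
from dataclasses import dataclass, field
from typing import Callable, List

Matrix = List[List[float]]


@dataclass
class Node:
    """A graph node: an operation plus the upstream nodes that feed it."""
    op: Callable[..., Matrix]
    inputs: List["Node"] = field(default_factory=list)

    def evaluate(self) -> Matrix:
        # Pull tensors along the incoming edges, then apply this node's operation.
        return self.op(*(node.evaluate() for node in self.inputs))


def constant(value: Matrix) -> Node:
    return Node(op=lambda: value)


def matmul(a: Node, b: Node) -> Node:
    def op(x: Matrix, y: Matrix) -> Matrix:
        return [[sum(x[i][k] * y[k][j] for k in range(len(y)))
                 for j in range(len(y[0]))] for i in range(len(x))]
    return Node(op=op, inputs=[a, b])


def add(a: Node, b: Node) -> Node:
    def op(x: Matrix, y: Matrix) -> Matrix:
        return [[xv + yv for xv, yv in zip(xr, yr)] for xr, yr in zip(x, y)]
    return Node(op=op, inputs=[a, b])


# Build the graph for x @ w + b; nothing is computed until evaluate() is called.
x = constant([[1.0, 2.0]])
w = constant([[3.0], [4.0]])
b = constant([[5.0]])
output = add(matmul(x, w), b)
result = output.evaluate()
print(result)
```

Separating graph construction from evaluation is what lets a framework decide later where each operation runs, whether on a CPU, a GPU, or another device.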

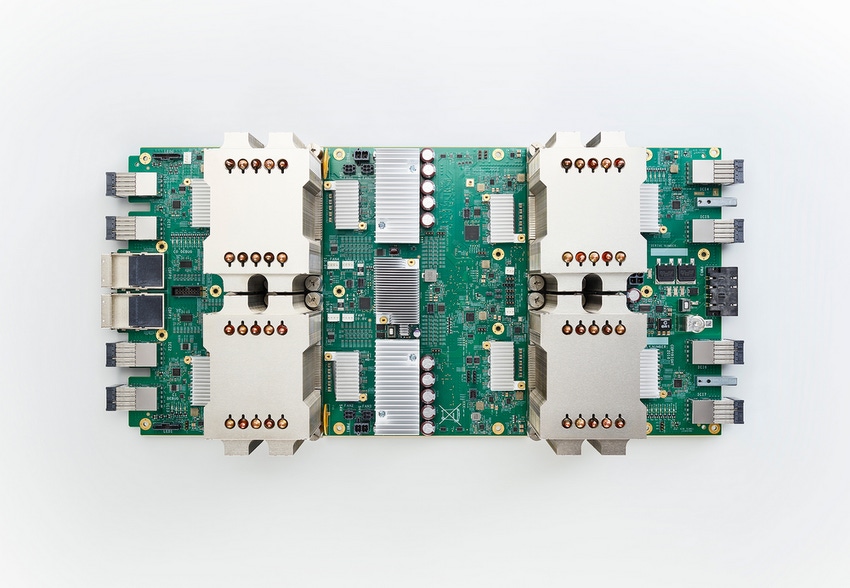

Google's tensor processing unit (TPU) runs all of the company's cloud-based deep learning apps and is at the heart of the AlphaGo AI. (Image source: Google)

TensorFlow's library of machine learning applications, which includes facial recognition, computer vision, and, of course, search, among other applications, has proved so popular that in 2016 Intel committed to optimizing its processors to run TensorFlow. In 2017 Google also released a Lite version of TensorFlow for mobile and Android developers.

But Google isn't letting software be the end of its AI ambitions. In 2016 the company released the first generation of a new processor it calls the tensor processing unit (TPU). Google's TPU is an ASIC built specifically with machine learning in mind and is tailor made for running TensorFlow. The second-generation TPUs were announced in May of this year and, according to Google, are able to deliver up to 180 teraflops of performance.

In a study released in June 2017 as part of the 44th International Symposium on Computer Architecture (ISCA) in Toronto, Canada, Google compared its TPUs deployed in data centers to Intel Haswell CPUs and Nvidia K80 GPUs deployed in the same data centers and found that the TPU performed, on average, 15 to 30 times faster than the GPUs and CPUs. The TPUs' TOPS per watt were also about 30 to 80 times higher. Google now says that TPUs are driving all of its online services such as Search, Street View, Google Photos, and Google Translate.

In a paper detailing its latest TPUs, Google engineers said the need for TPUs arose as far back as six years ago when Google found itself integrating deep learning into more and more of its products. “If we considered a scenario where people use Google voice search for just three minutes a day and we ran deep neural nets for our speech recognition system on the processing units we were using [at the time], we would have had to double the number of Google data centers!” the Google engineers wrote.

Google engineers said in designing the TPU they employed what they call “systolic design.” “The design is called systolic because the data flows through the chip in waves, reminiscent of the way that the heart pumps blood. The particular kind of systolic array in the [matrix multiplier unit] MXU is optimized for power and area efficiency in performing matrix multiplications, and is not well suited for general-purpose computation. It makes an engineering tradeoff: limiting registers, control and operational flexibility in exchange for efficiency and much higher operation density.”
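The wave-like data movement the engineers describe can be simulated in a few lines. The sketch below models a generic textbook systolic array in which each cell keeps its own running sum, values of one matrix move right, and values of the other move down, one cell per clock cycle. It is an illustration of the general idea only: the arrangement, the function name `systolic_matmul`, and the register names are assumptions, not a description of how Google's MXU is actually wired.

```python
from typing import List

Matrix = List[List[int]]


def systolic_matmul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply a (n x m) by b (m x p) on a simulated n x p grid of cells."""
    n, m, p = len(a), len(b), len(b[0])
    acc = [[0] * p for _ in range(n)]    # running sum held in each cell
    a_reg = [[0] * p for _ in range(n)]  # values of a, moving left to right
    b_reg = [[0] * p for _ in range(n)]  # values of b, moving top to bottom

    # The last operand pair reaches the bottom-right cell on cycle n + m + p - 3.
    for cycle in range(n + m + p - 2):
        next_a = [[0] * p for _ in range(n)]
        next_b = [[0] * p for _ in range(n)]
        for i in range(n):
            for j in range(p):
                if j == 0:
                    # Row i of a enters at the left edge, delayed by i cycles.
                    k = cycle - i
                    next_a[i][j] = a[i][k] if 0 <= k < m else 0
                else:
                    next_a[i][j] = a_reg[i][j - 1]
                if i == 0:
                    # Column j of b enters at the top edge, delayed by j cycles.
                    k = cycle - j
                    next_b[i][j] = b[k][j] if 0 <= k < m else 0
                else:
                    next_b[i][j] = b_reg[i - 1][j]
        a_reg, b_reg = next_a, next_b

        # Every cell performs one multiply-accumulate per cycle.
        for i in range(n):
            for j in range(p):
                acc[i][j] += a_reg[i][j] * b_reg[i][j]
    return acc


product = systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
print(product)
```

Each cell only ever talks to its immediate neighbors and needs no instruction fetch or general-purpose registers, which is the tradeoff the quote describes: very little flexibility in exchange for dense, efficient multiply-accumulate hardware.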

TPUs have also proven themselves in some very high-end AI applications. TPUs are the brains behind Google's famous AlphaGo AI which defeated world champion Go players last year. Recently, AlphaGo took a massive leap forward by demonstrating that it can teach itself to a level of mastery in a comparatively short time. With only months of training, the latest version of AlphaGo, AlphaGo Zero, was able to teach itself to a level of competency that far surpassed that of human experts. It did the same for chess (a complex game, but exponentially less so than Go) in only a matter of hours.

FPGAs – The Dark Horse in the AI Race

So, that's it, then: TPUs are the future of AI, right? Not so fast. While Nvidia, Google, and, to some degree, Intel are all focused on delivering AI at the edge – having AI processing happen on-board a device as opposed to the cloud – Microsoft claims its data centers can deliver high-performance, cloud-based AI comparable to, and possibly exceeding, any edge-based AI using an unexpected source – FPGAs. Microsoft believes its FPGA-based solution, codenamed Project Brainwave, will be superior to any offered by a CPU, GPU, or TPU in terms of scalability and flexibility.

Microsoft's Project Brainwave performed at 39.5 teraflops with less than one millisecond of latency when run on Intel Stratix 10 FPGAs. (Image source: Microsoft / Intel)

Whereas processor-based solutions are, in some way, restricted to specific tasks by virtue of their design, FPGAs are said to offer easier upgrades and improved performance over other options because of their flexibility and re-programmability. According to Microsoft, when run on Intel Stratix 10 FPGAs, Project Brainwave performed at 39.5 teraflops with less than one millisecond of latency.

Whether FPGAs offer the best option for AI is as debatable as any other claim in this race. Microsoft cites the high production costs of creating AI-specific ASICs as prohibitive, while others say FPGAs will never fully achieve the performance of a chip designed specifically for AI.

In a paper presented in March at the International Symposium on Field Programmable Gate Arrays (ISFPGA), a group of researchers from Intel's Accelerator Architecture Lab evaluated two generations of Intel FPGAs (the Arria 10 and Stratix 10) against the Nvidia Titan X Pascal (the predecessor to the Titan V) in handling deep neural network (DNN) algorithms. According to the Intel researchers, “Our results show that Stratix 10 FPGA is 10%, 50%, and 5.4x better in performance (TOP/sec) than Titan X Pascal GPU on [matrix multiply] (GEMM) operations for pruned, Int6, and binarized DNNs, respectively. ... On Ternary-ResNet, the Stratix 10 FPGA can deliver 60% better performance over Titan X Pascal GPU, while being 2.3x better in performance/watt. Our results indicate that FPGAs may become the platform of choice for accelerating next-generation DNNs.”

Who Wears the Crown?

At this particular point in time, it's hard to argue that GPUs aren't king when it comes to AI chips in terms of overall performance. That doesn't mean, however, that companies like Nvidia and AMD should rest on their laurels, confident that they hold the best solution. Competitors like Microsoft have a vested interest in maintaining their own status quo (Microsoft's data centers are FPGA-based) and turning AI consumers to their point of view.

What's more, the company that comes out on top may not be the one with the best hardware so much as the one whose hardware ends up inside the best application. While autonomous cars are looking to be the killer app that breaks AI into the wider public consciousness, it's too early to be definite. It could be an advancement in robotics, manufacturing, or even entertainment that really pushes AI through. And that's not to discount emerging applications that haven't even been reported or developed yet.

When the smoke clears it may not be one company or even one processor that dominates the AI landscape. We could see a future that shifts away from a one-size-fits-all approach to AI hardware and see a more splintered market where the hardware varies by application. Time will tell, but all of our devices will be a lot smarter once we get there.

Chris Wiltz is a senior editor at Design News covering emerging technologies including VR/AR, AI, and robotics.
