3D sensing has expanded from smartphones to smart manufacturing, smart cars, smart medicine, and more.

August 15, 2022

3D imaging technologies have seen a boost in the consumer marketplace since being integrated into the iPhone’s back camera. When iPhone sales soared in 2021, total mobile and consumer 3D camera revenue rose to about $3.6 billion, or 326 million units, according to a market report by Yole Group. However, 3D sensing in mobile cameras still has limited applications, with only a few augmented reality (AR) games available and infrequent use in other applications. Potential future applications for 3D sensing in the mobile sector include AR and the “metaverse,” both of which require further development of the technology to support virtualized worlds and human interaction with them.

Yet, there are perhaps more interesting potential applications for 3D sensing outside consumer virtual realities. In industrial and automotive settings, 3D cameras can be vital in performing delicate and complex work in manufacturing, logistics, medicine, and public security. Companies like ams OSRAM, an industry leader in 3D sensing tech, assert that 3D sensing is vital for Industry 5.0, in which machines and humans directly interact to create sustainable and resilient supply chains. Consequently, such sensing is positioned to play a huge role in smart technologies, robotics, factory automation, automatic optical inspection, and more. To date, 3D sensing and scanning have been among the lesser-talked-about technologies used in designing, inspection, and quality control, but integrating the tech can come with a number of benefits across applications, including reverse engineering and analysis.

Sensor Fusion

With sensor capabilities expanding in manufacturing and rapidly increasing data generation, sensor fusion becomes possible and vital. Visualizing manufacturing processes is crucial to form insights and prevent potential vulnerabilities. Sensor fusion overcomes the limitations of systems of individual sensors—cameras, light sensors, LIDAR, etc.—by fusing data from multiple sources and sensor types to produce reliable information. This architecture enables users to approach processes from either a macro or a micro level, as manufacturers can model supply chains and collect information as well as model the components of microprocessors, cameras, and LIDAR systems.
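As a rough illustration of how fusing sources can yield more reliable information than any single sensor, the sketch below combines two independent distance estimates by inverse-variance weighting, one common textbook approach. The `Reading` structure, the `fuse` function, and the numbers are assumptions made for this example and do not come from any system described in this article.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    """One distance estimate from a single sensor (hypothetical structure)."""
    value_m: float      # measured distance in meters
    variance_m2: float  # sensor noise variance in square meters


def fuse(readings: list[Reading]) -> Reading:
    """Combine independent readings by inverse-variance weighting."""
    if not readings:
        raise ValueError("need at least one reading")
    # A sensor with lower variance gets a proportionally larger weight.
    weights = [1.0 / r.variance_m2 for r in readings]
    total = sum(weights)
    value = sum(w * r.value_m for w, r in zip(weights, readings)) / total
    # The fused variance is never larger than the best single sensor's.
    return Reading(value_m=value, variance_m2=1.0 / total)


# Illustrative numbers only: a noisy camera depth estimate and a tighter LIDAR one.
camera = Reading(value_m=2.10, variance_m2=0.04)
lidar = Reading(value_m=2.00, variance_m2=0.01)
fused = fuse([camera, lidar])
print(f"fused distance: {fused.value_m:.3f} m, variance: {fused.variance_m2:.4f} m^2")
```

The fused estimate sits closer to the more trustworthy sensor, and its variance is lower than that of either input, which is the basic reason fusion can outperform individual sensors when their errors are independent.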

One of the benefits of fusing data from a number of sources is 3D machine vision, which can be achieved in real-time. Broadly, machine vision encompasses all industrial and non-industrial applications in which a combined effort of hardware and software provides operational guidance based on the capture and processing of images. Such industrial vision systems, though they use algorithms and approaches similar to academic and military computer vision applications, demand greater robustness, reliability, and stability—attributes improved by effective 3D vision systems. These systems can perform objective measurements, such as those needed when guiding a robot to align parts or validate fill levels of bottles.

This sensor-fusion concept is currently being developed as a collaboration between Amentum, a partner of the SecureAmerica Institute at Texas A&M, and Unity, a gaming company also known for creating immersive 3D AR and VR experiences from product and business data. The Unity team is working to build a visualization system that models the sensor-fusion entities and uses their data to produce real-time information, which the team believes will be a key part of ensuring more resilient supply chain and manufacturing processes. Ultimately, the hope is to build a sensor-fusion graphic library that will enable a collaborative ecosystem in which industry players can share data and technology, using it to model scenarios and iterate simulations to detect potential problems.

3D Scanning

Part of these potential 3D-sensing ecosystems will involve 3D-scanning technologies, which recreate information about physical components in a simulated world with exact dimensions. This can be done using laser scanners, light scanners, coordinate measuring machines (CMMs), and computed tomography (CT) scanners. Generally, these scanners gather raw data and convert it into user-friendly formats like CAD models. Their precision is in millimeters, making it possible to monitor consistency in objects as well as recreate objects digitally for analysis. Complete product forms can be checked against the initial template, and inconsistencies are highlighted with high precision.
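The idea of checking a scanned part against its template can be sketched as a simple deviation test: for each scanned point, find the nearest template point and flag it if the gap exceeds a tolerance. This is a minimal sketch under stated assumptions, not how any particular scanner or CAD package works; the function names, the 0.5 mm tolerance, and the sample coordinates are all hypothetical, and real tools typically compare against surfaces after aligning the scan to the model.

```python
import math

Point = tuple[float, float, float]  # (x, y, z) in millimeters


def nearest_distance(point: Point, template: list[Point]) -> float:
    """Distance in mm from a scanned point to the closest template point."""
    return min(math.dist(point, t) for t in template)


def out_of_tolerance(
    scan: list[Point], template: list[Point], tolerance_mm: float
) -> list[tuple[Point, float]]:
    """Return scanned points whose deviation from the template exceeds the tolerance."""
    flagged = []
    for p in scan:
        deviation = nearest_distance(p, template)
        if deviation > tolerance_mm:
            flagged.append((p, deviation))
    return flagged


# Hypothetical template: the four corners of a 10 mm square plate.
template = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0), (10.0, 10.0, 0.0), (0.0, 10.0, 0.0)]
# Hypothetical scan: three corners are close to nominal, one is lifted by 0.8 mm.
scan = [(0.1, 0.0, 0.0), (10.0, 0.05, 0.0), (10.0, 10.0, 0.8), (0.0, 10.0, 0.0)]

flagged = out_of_tolerance(scan, template, tolerance_mm=0.5)
for point, deviation in flagged:
    print(f"point {point} deviates by {deviation:.2f} mm")
```

The brute-force nearest-point search is fine for a handful of points; a dense scan would call for a spatial index, which is left out here to keep the example short.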

A report published in 2021 on ScienceDirect highlights the potential of 3D scanning throughout the industrial sphere, including reverse engineering, customizing digital replicas from existing component designs for quality assurance and dimension analysis, and monitoring work processes to ensure worker safety. Companies that were previously focused on the mobile consumer space have turned their attention to industrial applications. ams OSRAM, for example, is investigating industry automation, autonomous robots, drone implementation, and more, as demonstrated in a video tutorial.

Infineon, a manufacturer of semiconductor technologies, has also made moves into the space. In May 2022, it announced a partnership with pmdtechnologies to develop 3D depth-sensing technology for Magic Leap 2. This move is based on the belief that AR applications are positioned to fundamentally change the ways we live and work and will be vital to developing new industrial and medical applications. The Magic Leap 2 AR headsets are designed to enable operators to work more efficiently and optimize complex processes as well as improve collaboration among staff. In short, the headset captures the user’s full physical environment, while the new time-of-flight imager—with a 3D image sensor—helps the device understand and interact with its surroundings. The 3D imager allows the headset to detect objects precisely to the millimeter, improving safety and efficiency.
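The principle behind a time-of-flight imager can be illustrated with the basic relation between distance and the round-trip travel time of light, d = c * t / 2. The sketch below assumes the simplest direct (pulsed) model; many commercial time-of-flight sensors instead infer the delay from the phase of modulated light, and the source does not say which method this imager uses. The function names are hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact by definition of the meter


def tof_distance_m(round_trip_s: float) -> float:
    """Distance to a target given the round-trip time of a light pulse."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels to the target and back, so halve the path length.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def round_trip_for_distance_s(distance_m: float) -> float:
    """Round-trip time a pulse needs to cover a given one-way distance."""
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


# Timing difference that corresponds to a 1 mm change in depth.
dt = round_trip_for_distance_s(0.001)
print(f"1 mm of depth corresponds to {dt * 1e12:.2f} ps of round-trip time")
```

A depth change of one millimeter shifts the round trip by only a few picoseconds, which gives a sense of why millimeter-level depth sensing places heavy demands on the sensor's timing.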

3D Imaging in Medicine and Beyond

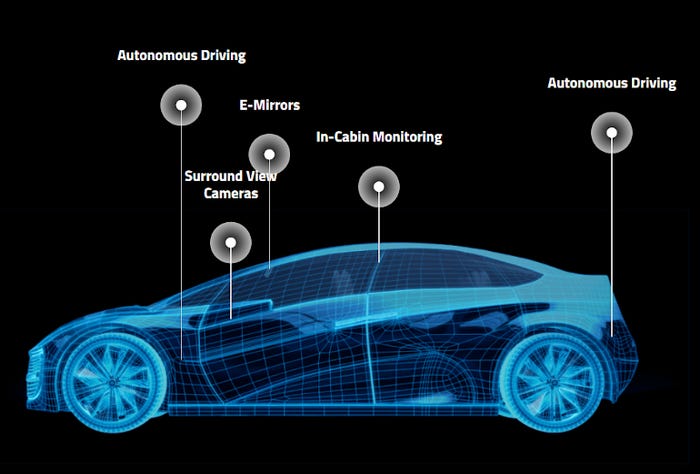

3D imaging devices also have numerous medical and automotive applications. 3D sensing, modeling, and printing support precision in production of prosthetics and other devices as well as in providing surgeons precise direct and indirect views for understanding a patient’s anatomy. A partnership between OMNIVISION and Lighthouse Imaging created the first commercial platform for 3D endoscopic devices in 2019, providing depth information to surgeons and reducing the risks of endoscopic surgeries. Since then, applications have continued to expand to include patient monitoring during a scan, collecting data for image-guided surgery, and mapping for reconstructive cosmetic surgery. In the automotive industry, 3D sensing is in development for everything from autonomous driving to in-cabin monitoring and e-mirrors.

The various kinds of 3D sensing technologies are not without their drawbacks, though systems that combine methods may alleviate some of them. Time-of-flight and structured light sensors are subject to random noise, while laser sensing and stereo vision provide limited focus.

Trends in 3D sensing appear to be moving toward collaborative systems that involve human operators, robotics, and a variety of sensing and data analysis tools to create robust and accurate datasets that are easily acted upon in a variety of settings, from planning stages to operating rooms and factory floors. The concept of the metaverse may have stimulated a new understanding of 3D sensing in the market, but the technology also has great potential for integration into how we imagine manufacturing.
