Business viability will depend on quantum error correction, quantum volume, and qubit-sensitive algorithms. What does it all mean?

August 10, 2021

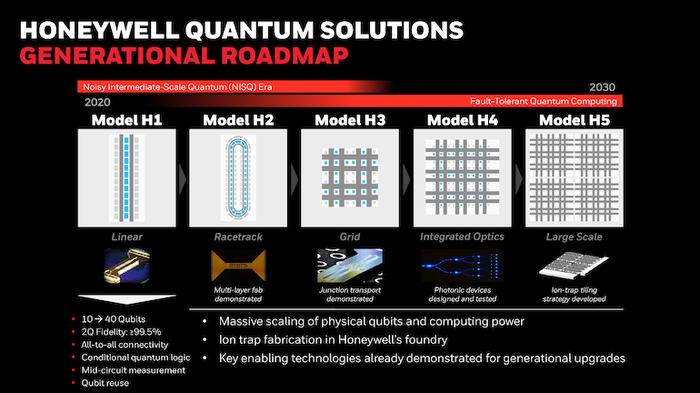

Recent scientific and technological breakthroughs in quantum computing hardware and software point toward the commercial viability of quantum computers. Specifically, Honeywell and Cambridge Quantum have announced three scientific and technical milestones that move large-scale quantum computing closer to the commercial world.

These milestones are demonstrating real-time quantum error correction (QEC), doubling the quantum volume of Honeywell’s System Model H1 to 1,024, and developing a new quantum algorithm that uses fewer qubits to solve optimization problems. Let’s break each of these topics down into understandable “bits” of information.

Quantum Error Correction

Real-time quantum error correction (QEC) protects quantum information from errors caused by decoherence and other quantum noise. Decoherence is the loss of quantum coherence, and it can be viewed as information leaking from a system into its environment. Coherence is needed to compute on information encoded in quantum states.

Classical error correction, by contrast, relies on simple redundancy. The most basic approach is to store several copies of the information in memory and compare them to determine whether any copy has been corrupted. Quantum information cannot simply be copied this way, so QEC instead spreads one logical qubit across several physical qubits.
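The classical redundancy scheme described above can be sketched in a few lines of Python. This is a minimal illustration rather than anything from the Honeywell announcement; the function names (`encode`, `flip`, `is_corrupted`, `decode`) and the choice of three copies are assumptions made for the example.

```python
def encode(bit, copies=3):
    """Store the same bit several times (a simple repetition code)."""
    return [bit] * copies


def flip(codeword, positions):
    """Return a copy of the codeword with the bits at `positions` flipped."""
    return [b ^ 1 if i in positions else b for i, b in enumerate(codeword)]


def is_corrupted(codeword):
    """Comparing the copies reveals corruption whenever they disagree."""
    return len(set(codeword)) > 1


def decode(codeword):
    """Majority vote: recover the value that most copies agree on."""
    return 1 if 2 * sum(codeword) > len(codeword) else 0


stored = encode(1)            # three copies of the bit 1
damaged = flip(stored, {1})   # one copy is corrupted
print(is_corrupted(damaged), decode(damaged))
```

With three copies, a single flipped copy is detected and outvoted. Two flipped copies would win the vote and produce the wrong answer, which is why more copies are needed at higher error rates.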

Another difference between classical and quantum error correction is one of continuity. In classical error correction, a bit is either a “1” or a “0,” so an error can only flip it from one value to the other. Errors on a quantum state, however, are continuous: a qubit can be partially flipped, or its phase can be partially shifted.
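To make the idea of a partial flip concrete, the sketch below models an error as a rotation of a single qubit about the X axis by an angle `theta`. The rotation model and the names `rotate_x` and `flip_probability` are assumptions chosen for illustration; real devices experience many other kinds of noise.

```python
import math


def rotate_x(theta, state):
    """Apply an X-axis rotation by `theta` radians to a one-qubit state.

    `state` is a pair of complex amplitudes for |0> and |1>.
    """
    c = math.cos(theta / 2)
    s = math.sin(theta / 2)
    a, b = state
    return (c * a - 1j * s * b, -1j * s * a + c * b)


def flip_probability(theta):
    """Probability of measuring 1 after rotating a qubit that started in |0>."""
    _, amplitude_one = rotate_x(theta, (1 + 0j, 0j))
    return abs(amplitude_one) ** 2


# theta = 0 is no error, theta = pi is a full bit flip,
# and every angle in between is a partial flip.
for theta in (0.0, 0.1, math.pi / 2, math.pi):
    print(f"theta={theta:.3f}  P(read 1)={flip_probability(theta):.4f}")
```

A classical bit has only the two extreme cases. A qubit can land anywhere in between, with a flip probability of sin²(theta/2) in this model.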

Honeywell researchers addressed quantum error correction by encoding a single logical qubit in seven of the ten physical qubits available on the System Model H1 and then applying multiple rounds of QEC. Protected from the main types of errors that occur in a quantum computer, the logical qubit resists the errors that accumulate during computations.
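The article does not say which seven-qubit code was used. As a rough, purely classical sketch of how a seven-bit block can locate an error without reading the encoded value, the example below assumes the parity checks of the [7,4] Hamming code, which are also the checks used by the well-known seven-qubit Steane code. The names `PARITY_CHECKS`, `syndrome`, and `locate_error` are hypothetical, and only bit-flip errors are modeled.

```python
# Assumption: Hamming [7,4] parity checks. Column k (1-based) is the
# binary representation of k, most significant bit in the first row.
PARITY_CHECKS = [
    [0, 0, 0, 1, 1, 1, 1],
    [0, 1, 1, 0, 0, 1, 1],
    [1, 0, 1, 0, 1, 0, 1],
]


def syndrome(error):
    """Evaluate each parity check on a 7-bit error pattern."""
    return tuple(
        sum(h * e for h, e in zip(row, error)) % 2 for row in PARITY_CHECKS
    )


def locate_error(syndrome_bits):
    """Map a syndrome to the 0-based position of a single flip, or None."""
    index = int("".join(str(bit) for bit in syndrome_bits), 2)
    return index - 1 if index else None


# A single flip on the fifth bit (0-based position 4).
error = [0, 0, 0, 0, 1, 0, 0]
print(syndrome(error), locate_error(syndrome(error)))
```

The three check results point to the flipped position while revealing nothing about the encoded value itself. A quantum code measures checks of this kind through helper qubits and repeats them round after round.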

Quantum Volume

Quantum Volume (QV) is another key metric used to gauge quantum computing performance. QV is a single number meant to encapsulate the performance of a quantum computer, much as transistor count does for classical computers under Moore’s Law.

QV is a hardware-agnostic metric that IBM initially used to measure the performance of its quantum computers. The metric was needed because a classical computer’s transistor count and a quantum computer’s qubit count aren’t comparable. Qubits can decohere, forgetting their assigned quantum information, in less than a millisecond. For quantum computers to be commercially viable and useful, they need low-error, highly connected, and scalable qubits to ensure a fault-tolerant and reliable system. That is why QV now serves as a benchmark for the progress quantum computers are making toward solving real-world problems.

According to Honeywell’s recent release, the System Model H1 has become the first quantum computer to achieve a demonstrated quantum volume of 1,024. This QV represents a doubling of its record from just four months ago.
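Under IBM’s definition, quantum volume is reported as 2 raised to the power n, where n is the largest number of qubits on which the machine reliably runs random test circuits that are n layers deep. The short sketch below works backward from a reported QV to that n; the function name `circuit_width_for_qv` is a hypothetical helper for illustration.

```python
def circuit_width_for_qv(quantum_volume):
    """Return n such that quantum_volume == 2**n.

    Raises ValueError if the value is not a positive power of two.
    """
    n = quantum_volume.bit_length() - 1
    if quantum_volume < 1 or 2 ** n != quantum_volume:
        raise ValueError("quantum volume must be a power of two")
    return n


print(circuit_width_for_qv(512))    # the earlier record
print(circuit_width_for_qv(1024))   # the new record
```

Doubling the quantum volume from 512 to 1,024 therefore corresponds to passing the benchmark with one more qubit and one more circuit layer, moving from 9 to 10.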

The third milestone comes from Cambridge Quantum Computing, which recently merged with Honeywell. It has developed a new quantum algorithm that uses fewer qubits to solve optimization problems.

John Blyler is a Design News senior editor, covering the electronics and advanced manufacturing spaces. With a BS in Engineering Physics and an MS in Electrical Engineering, he has years of hardware-software-network systems experience as an editor and engineer within the advanced manufacturing, IoT and semiconductor industries. John has co-authored books related to system engineering and electronics for IEEE, Wiley, and Elsevier.
