Recent speakers at the European Test Symposium (ETS) tell all about testing.

June 2, 2021

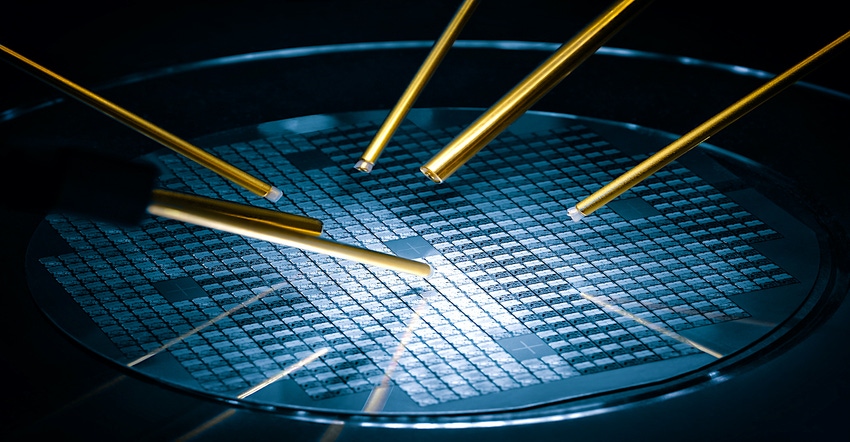

The testing and verification of semiconductor chips was a prominent topic at this year's European Test Symposium (ETS), especially in the area of Design-for-Test (DfT) tools and techniques. Cadence, Siemens, and Synopsys each discussed different aspects of ongoing test challenges.

For example, the accuracy, resolution, and performance of a scan-based failure diagnosis tool are critical for enabling faster silicon chip testing and improving overall yields. Scan-based diagnostic methods identify and locate defects on devices that fail manufacturing test or are returned from the field. But selecting the right failing devices for physical failure analysis is a challenge. To address this problem, some semiconductor manufacturers have incorporated scan diagnosis into the yield analysis process.
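To make scan-based diagnosis more concrete, here is a minimal sketch of one classic approach, fault-dictionary lookup: each candidate fault has a precomputed set of failures it would cause (pattern index, failing scan cell), and candidates are ranked by how closely that set matches what the tester logged. The fault names, scan-cell names, and the use of Jaccard similarity as the score are illustrative assumptions; this is not how any particular commercial tool, including those presented at ETS, scores candidates.

```python
from typing import Dict, List, Set, Tuple

# One observed or predicted failure: (pattern index, failing scan cell).
Failure = Tuple[int, str]


def rank_candidates(dictionary: Dict[str, Set[Failure]],
                    observed: Set[Failure]) -> List[Tuple[str, float]]:
    """Rank candidate faults by Jaccard similarity between predicted and observed failures."""
    ranked = []
    for fault, predicted in dictionary.items():
        union = predicted | observed
        score = len(predicted & observed) / len(union) if union else 0.0
        ranked.append((fault, score))
    # Best match first; ties broken alphabetically so the output is deterministic.
    ranked.sort(key=lambda item: (-item[1], item[0]))
    return ranked


if __name__ == "__main__":
    # Hypothetical fault dictionary, as fault simulation of the scan patterns might produce.
    fault_dictionary = {
        "U12/A stuck-at-0": {(0, "core1/ff3"), (4, "core1/ff3"), (7, "core1/ff9")},
        "U12/Z stuck-at-1": {(0, "core1/ff3"), (2, "core1/ff5")},
        "U40/B stuck-at-1": {(3, "core2/ff1")},
    }
    # Hypothetical tester datalog for one failing device.
    tester_failures = {(0, "core1/ff3"), (4, "core1/ff3"), (7, "core1/ff9")}
    for fault, score in rank_candidates(fault_dictionary, tester_failures):
        print(f"{score:.2f}  {fault}")
```

The first candidate explains every logged failure and nothing else, so it ranks first (score 1.00), followed by a partial match (0.25) and a non-match (0.00). Scoring by similarity rather than exact match reflects that real defects often behave only approximately like a modeled fault; a ranked list of this kind is one input that can help engineers decide which failing devices merit physical failure analysis.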

Most diagnosis challenges are exacerbated by ever-increasing design sizes, complex DfT architectures, and new defect types, noted Sameer Chillarige of Cadence Design Systems in his virtual ETS presentation. These challenges make the overall diagnosis process more tedious and resource-intensive. Chillarige focused on the diagnostics capabilities of the Modus DfT Software Solution that enable high accuracy, resolution, and performance during the diagnostic process.

As chip geometries have continued to shrink, even as the benefits of Moore's Law slow, the struggle to test and verify chip designs has grown. Siemens' Geir Eide delved into how the increasing complexity of large System-on-Chip (SoC) designs presents specific challenges for DfT, especially for traditional hierarchical DfT methods. Eide covered how Tessent Streaming Scan Network (SSN) technology helps to ease the trade-off between test implementation effort and manufacturing test cost by decoupling core-level and chip-level DfT.

Design-For-Test

Design-for-Test (DfT) is a semiconductor chip development methodology that adds testability features to a hardware product design. A DfT approach and its related tools aim to make it practical to verify that the manufactured hardware contains no defects that could affect its functioning.

DfT techniques have been used at least since the early days of electronic data processing equipment. Early examples from the 1940s and 1950s used switches and instruments to “scan” (i.e., selectively probe) the voltage and current at specific internal nodes in an analog computer.

The product development cycle for today's complex ICs keeps shrinking, and this is not a new trend. The challenge is to ensure that the rising cost to test a transistor (or register) does not exceed the cost to manufacture it. One way to address this cost imbalance is to treat test, especially manufacturing test, as a design requirement. This is the basic idea behind the popular DfT approach to IC development.

One way to perform such tests is to embed scan chains into the silicon. Applying test patterns through these chains requires automatic test equipment (ATE), so it should be no surprise that EDA test tool vendors have worked closely with ATE providers to handle scan-based test patterns efficiently and to minimize the test application time for scan patterns. In this way, DfT approaches cut the cost of test by reducing the number of test patterns, and the tester time, needed to verify the “goodness” of a chip.
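A rough model helps show why scan chain length drives test application time. The sketch below assumes a simplified scan architecture: flops split evenly across chains, each new pattern shifted in while the previous response shifts out, one capture cycle per pattern, and no scan compression. The flop count, chain counts, pattern count, and 50 MHz shift clock are hypothetical.

```python
import math
from collections import deque
from typing import List


def shift(chain: List[int], scan_in: List[int]) -> List[int]:
    """Clock the chain once per scan_in bit, updating it in place.

    Index 0 is the cell nearest scan-in; the last cell drives scan-out.
    Returns the bits observed at scan-out, in the order they appeared.
    """
    state = deque(chain)
    observed = []
    for bit in scan_in:
        observed.append(state.pop())  # last cell shifts out
        state.appendleft(bit)         # new bit enters at scan-in
    chain[:] = list(state)
    return observed


def scan_test_cycles(num_flops: int, num_chains: int, num_patterns: int) -> int:
    """Approximate tester cycles to apply num_patterns scan patterns."""
    chain_length = math.ceil(num_flops / num_chains)
    # Each pattern: chain_length shift cycles plus 1 capture cycle.
    # One extra full shift unloads the response to the last pattern.
    return num_patterns * (chain_length + 1) + chain_length


if __name__ == "__main__":
    # Load a new pattern while unloading the previously captured response.
    chain = [1, 0, 0]                     # response captured by the last pattern
    unloaded = shift(chain, [1, 1, 0])
    print(unloaded, chain)                # [0, 0, 1] [0, 1, 1]

    shift_hz = 50e6                       # hypothetical 50 MHz shift clock
    for chains in (100, 1_000):
        cycles = scan_test_cycles(1_000_000, chains, 5_000)
        print(f"{chains:>5} chains: {cycles:,} cycles, {cycles / shift_hz:.4f} s")
```

With these assumed numbers, going from 100 to 1,000 chains shortens each chain tenfold and cuts the cycle count from about 50 million to about 5 million. Real flows typically add on-chip scan compression on top of this, which the model leaves out.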

Automatic test pattern generation (ATPG) software generates the test vectors that the ATE applies. Because larger designs contain more potential fault sites for ATPG to target, increases in chip complexity translate directly into more test patterns to achieve an equivalent level of testing.
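The link between design size and pattern count is visible even on a toy circuit. The sketch below performs ATPG by brute force for single stuck-at faults on a hypothetical three-input circuit, y = (a AND b) OR c: it fault-simulates every input vector, then greedily keeps vectors until every fault is detected. Production ATPG tools instead use structural algorithms such as the D-algorithm or PODEM, because enumerating all 2^n input vectors is infeasible for real designs; the circuit, net names, and greedy compaction step here are illustrative assumptions.

```python
from itertools import product
from typing import Dict, List, Optional, Set, Tuple

NETS = ["a", "b", "c", "n1", "y"]
Fault = Tuple[str, int]          # (net name, stuck-at value)
Vector = Tuple[int, int, int]    # (a, b, c)


def simulate(a: int, b: int, c: int, fault: Optional[Fault] = None) -> int:
    """Evaluate y = (a AND b) OR c, optionally forcing one net to a stuck value."""
    def force(net: str, value: int) -> int:
        return fault[1] if fault is not None and fault[0] == net else value

    a, b, c = force("a", a), force("b", b), force("c", c)
    n1 = force("n1", a & b)
    return force("y", n1 | c)


def generate_patterns() -> Tuple[List[Vector], Set[Fault]]:
    """Return a compact pattern set and any faults left undetected."""
    faults = {(net, value) for net in NETS for value in (0, 1)}
    # Fault simulation: which faults does each vector expose at the output?
    detects: Dict[Vector, Set[Fault]] = {}
    for vec in product((0, 1), repeat=3):
        good = simulate(*vec)
        detects[vec] = {f for f in faults if simulate(*vec, fault=f) != good}

    # Greedy compaction: repeatedly keep the vector that detects the most
    # still-undetected faults (ties go to the lowest vector).
    undetected = set(faults)
    patterns: List[Vector] = []
    while undetected:
        best = max(sorted(detects), key=lambda v: len(detects[v] & undetected))
        gained = detects[best] & undetected
        if not gained:
            break  # whatever remains is undetectable from the output
        patterns.append(best)
        undetected -= gained
    return patterns, undetected


if __name__ == "__main__":
    patterns, undetected = generate_patterns()
    print(f"{len(patterns)} patterns: {patterns}; undetected: {undetected}")
```

Here four of the eight possible vectors detect all ten stuck-at faults. Each added gate adds nets, and with them more faults to target and more vectors to keep, which is the growth in pattern count described above.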

Testing for Multi-Chip Modules

The last presentation at this year's ETS focused on testing multi-chip modules (MCMs). An MCM is a popular way to combine multiple integrated circuit (IC) chips, semiconductor dies, and other discrete components on a unifying substrate, so that the entire module can be treated as one larger IC. MCM packaging lets a manufacturer use multiple components for modularity and/or to improve yields compared with a conventional monolithic IC.

But the benefits of an MCM can be outweighed if the package fails late in the manufacturing process, during final integrated testing. As Guy Cortez of Synopsys pointed out in his presentation, such failures mean a lost opportunity to sell these chips and make it impossible to recoup the high testing and packaging costs already invested in the failed MCMs.
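A back-of-the-envelope yield model shows both why MCMs can improve yield and why a late failure is so costly. The sketch below uses the textbook Poisson yield model, Y = exp(-A·D0) for die area A and defect density D0, and compares silicon consumed per good product for a monolithic die, for the same area split into smaller dies that are tested before assembly, and for those dies assembled untested so that bad dies are only caught at final module test. The 4 cm² area and 0.2 defects/cm² density are hypothetical, and the model ignores assembly loss, test escapes, and packaging cost.

```python
import math


def poisson_yield(area_cm2: float, defects_per_cm2: float) -> float:
    """Probability that a die of the given area has no killer defects (Poisson model)."""
    return math.exp(-area_cm2 * defects_per_cm2)


def silicon_per_good_product(total_area_cm2: float, n_dies: int,
                             defects_per_cm2: float, screen_dies_first: bool) -> float:
    """Silicon area (cm^2) consumed per good product, ignoring assembly loss."""
    die_area = total_area_cm2 / n_dies
    die_yield = poisson_yield(die_area, defects_per_cm2)
    if screen_dies_first:
        # Bad dies are discarded before assembly: each good module needs n_dies
        # good dies, and each good die costs die_area / die_yield of silicon.
        return n_dies * die_area / die_yield
    # Unscreened: the module passes only if every die is good, so one bad die
    # scraps the whole module, including its good dies.
    module_yield = die_yield ** n_dies
    return total_area_cm2 / module_yield


if __name__ == "__main__":
    area, d0 = 4.0, 0.2  # hypothetical total silicon area and defect density
    print(f"monolithic:         {silicon_per_good_product(area, 1, d0, True):.2f} cm^2")
    print(f"4 dies, screened:   {silicon_per_good_product(area, 4, d0, True):.2f} cm^2")
    print(f"4 dies, unscreened: {silicon_per_good_product(area, 4, d0, False):.2f} cm^2")
```

Under these assumptions, screening the smaller dies first roughly halves the silicon per good product (about 4.89 cm² versus 8.90 cm²), while assembling untested dies gives no benefit over the monolithic die, because every bad die found at final test takes good dies and packaging down with it.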

However, applying data analytics across the various manufacturing test stages can help find the source of a problem earlier in the manufacturing cycle. Cortez introduced specific analytical methods, such as data feed-backward and data feed-forward, that enable test engineers to perform fast root-cause analysis and pinpoint where an issue originated. Test engineers can then add preventive measures to bin out suspect dies earlier in the process, avoiding costly failures downstream.
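One simple way to picture data feed-forward is a statistical screen: a parametric measurement taken at an earlier stage (say, leakage current at wafer sort) is used to flag outlier dies before they are committed to an expensive MCM assembly. The sketch below uses a generic median/MAD robust outlier rule; the die IDs, leakage values, and the k = 3 threshold are hypothetical, and this is not a description of the specific methods Cortez presented.

```python
import statistics
from typing import Dict, List


def flag_outlier_dies(measurements: Dict[str, float], k: float = 3.0) -> List[str]:
    """Return IDs of dies whose value is more than k robust sigmas from the median.

    The median absolute deviation (MAD) is scaled by 1.4826 so that, for normally
    distributed data, it estimates the standard deviation.
    """
    values = list(measurements.values())
    center = statistics.median(values)
    mad = statistics.median(abs(v - center) for v in values)
    if mad == 0:
        return []  # no measurable spread, so no basis for calling outliers
    robust_sigma = 1.4826 * mad
    return sorted(die for die, v in measurements.items()
                  if abs(v - center) > k * robust_sigma)


if __name__ == "__main__":
    # Hypothetical wafer-sort leakage readings (microamps) fed forward to assembly.
    leakage_ua = {
        "W1-D01": 10.2, "W1-D02": 9.8, "W1-D03": 10.5, "W1-D04": 10.1,
        "W1-D05": 9.9, "W1-D06": 14.7, "W1-D07": 10.0,
    }
    suspects = flag_outlier_dies(leakage_ua)
    print("bin out before assembly:", suspects)  # ['W1-D06']
```

Using the median and MAD rather than the mean and standard deviation keeps a single extreme die from inflating the estimated spread and masking itself.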

As chips continue to grow in design complexity, so too do the test and manufacturing activities. Fortunately, EDA tool vendors are addressing test across the entire chip development process.

John Blyler is a Design News senior editor, covering the electronics and advanced manufacturing spaces. With a BS in Engineering Physics and an MS in Electrical Engineering, he has years of hardware-software-network systems experience as an editor and engineer within the advanced manufacturing, IoT and semiconductor industries. John has co-authored books related to system engineering and electronics for IEEE, Wiley, and Elsevier.
