How much time do you spend debugging?

March 19, 2021

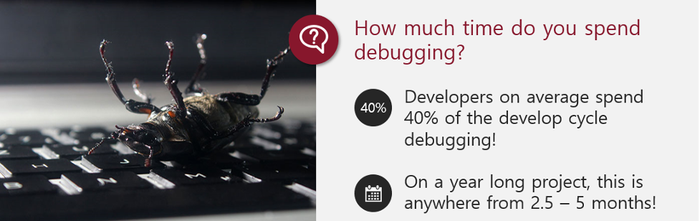

One of the greatest development challenges facing embedded software developers is the amount of time that they spend debugging their systems. On average, developers spend 40 percent of their time debugging!

That figure is only an average. When I ask “How much time do you spend debugging?” during a conference talk, the answers tend to match the 40 percent seen in surveys, but there are also developers who spend as much as 80 percent of their time debugging!

On a 12-month project, an average developer who spends 40 percent of their time debugging loses almost 5 months to it! This is absolute insanity considering that debugging is really failure work, that is, fixing something that was done incorrectly the first time.
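The arithmetic behind that figure is simple enough to sketch in a few lines of C. The function name `debug_months` and its parameters are made up for illustration; only the 12-month, 40 percent, and 80 percent figures come from the discussion above.

```c
/* Months of a project consumed by debugging.
 * project_months: total project length in months.
 * debug_percent:  share of time spent debugging, as a percentage (0 to 100).
 * Both the name and the signature are hypothetical, for illustration only. */
static double debug_months(double project_months, double debug_percent)
{
    return project_months * debug_percent / 100.0;
}
```

With these inputs, `debug_months(12.0, 40.0)` works out to about 4.8 months, and the 80 percent case, `debug_months(12.0, 80.0)`, to about 9.6 months.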

We are going to explore the mindset behind bugs and start defining how faults, errors, and bugs are related.

Bugs are a figment of your imagination

When it comes to defining what a bug is, let’s just dispose of the niceties and rip the band-aid right off. A bug is a mistake or an error that is injected into a system by a developer due to misunderstanding a spec, not understanding hardware, or through general carelessness. Ouch!

That really hurts, but the truth is that bugs don’t just crawl into our code and systems. They are mistakes made by an engineer, who then shifts the responsibility from themselves to an imaginary bug that stood up, crawled into the system, and caused a problem.

Software bugs are inevitable; we are only human, and we can’t be perfect. The systems we build are complex, and there is no way we are going to get it right the first time, every time. I’ll be the first to say that I used to be one of those people who raised their hand saying I spend 80 percent of my time debugging.

Today, I spend well under 20 percent, and that is because I’ve changed my mindset on what a bug is, taken responsibility for them, and changed the way I program to minimize bugs. The result has been increased productivity, less time spent redoing things, and fewer missed deadlines. “Bug” is a pretty wide and generic term, so let’s stop thinking about bugs and consider instead errors and defects.

Defining errors and defects

An error is a mistake made by a programmer in implementing the software design. The error could come about from a misunderstanding in a specification or a requirement. Errors can be prevented by performing reviews and through conversations with stakeholders to ensure a proper understanding of the design.

A defect on the other hand is a mistake that results from unanticipated interactions or behaviors that occur when implementing the software. Defects can be managed in many ways including using test harnesses, test-driven development, integration testing, and so forth. Sometimes you just don’t know what you don’t know until you write some code and discover that your assumptions were wrong.

I think it is important for developers to recognize that we are responsible for preventing errors and defects and not just removing them. We can save ourselves a lot of pain and stress and decrease development times and costs by preventing defects and errors in the first place.

If we can’t prevent them, then we at least want to find them as quickly as possible. The longer a bug sits in the code, the longer it will take to find, understand, and resolve.

The Defect Management Pyramid

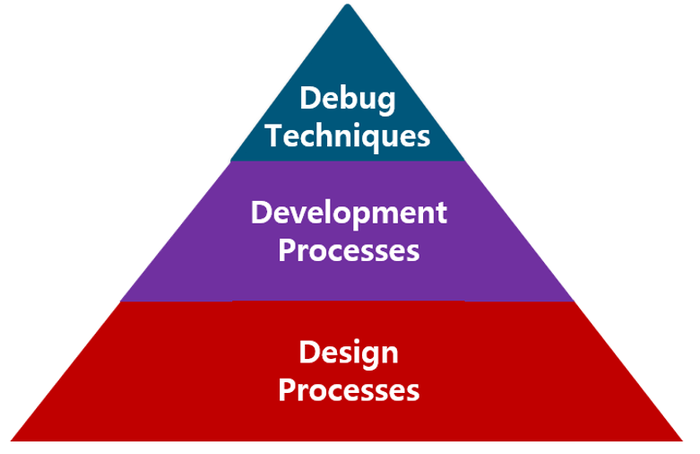

Preventing errors and defects comes down to properly addressing the defect management pyramid which you can see below:

The base of this pyramid is design processes. These are the techniques we use to ensure that our design is solid and that we are properly translating requirements into a design that can be built and that meets the system’s and users’ needs. Spending time here to make sure we get things right forms our foundation for minimizing defects and errors.

Next, we have our development processes. These are the techniques we use to develop our software. Are we using techniques like test-driven development to create tests that drive our software implementation and build automated test harnesses to detect issues? Are we writing modular code? Are we commenting our code so that we can understand our design decisions?

Even following best practices and coding standards fits within this area and helps ensure we minimize defects and errors.
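To make the test-driven idea concrete, here is a minimal sketch in C using nothing more than `assert`. The unit under test, `adc_to_millivolts`, is hypothetical, as are the assumed 12-bit ADC range and 3300 mV reference. The tests are written first and describe the behavior we want; the implementation follows and exists only to make them pass.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical unit under test: converts a 12-bit ADC reading to
 * millivolts, assuming a 3300 mV reference (both are assumptions). */
static uint32_t adc_to_millivolts(uint16_t counts);

/* Step 1: write the tests that describe the required behavior. */
static void test_adc_to_millivolts(void)
{
    assert(adc_to_millivolts(0u) == 0u);        /* bottom of range */
    assert(adc_to_millivolts(4095u) == 3300u);  /* full scale */
    assert(adc_to_millivolts(2048u) == 1650u);  /* mid scale, truncated */
    assert(adc_to_millivolts(5000u) == 3300u);  /* out of range saturates */
}

/* Step 2: write just enough implementation to make the tests pass. */
static uint32_t adc_to_millivolts(uint16_t counts)
{
    if (counts > 4095u) {
        counts = 4095u;  /* saturate readings beyond the 12-bit range */
    }
    return ((uint32_t)counts * 3300u) / 4095u;
}
```

Calling `test_adc_to_millivolts()` from a host-side test build runs the checks. A real project would more likely use a dedicated unit test framework, but the workflow is the same: the test exists before the code it exercises.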

Finally, the top of the pyramid is debugging techniques. Debugging techniques are things like using a trace analyzer to monitor code execution, as well as using assertions, breakpoints, printf statements, statistical analysis, stack analysis, and so forth.

These are the techniques that help us find a bug once we have one. They are very important, but if we can prevent the defects and errors from ever occurring in the first place, then we don’t have to rely on them to get us to production.
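As one example of the techniques listed above, assertions let code state its own assumptions so that a violation is caught where it happens rather than far downstream. The sketch below uses the standard C `assert` macro; the function `buffer_average` and its parameters are hypothetical and exist only for illustration.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper: integer average of a buffer of samples.
 * The assertions document and check the caller's obligations. */
static uint32_t buffer_average(const uint16_t *samples, size_t count)
{
    assert(samples != NULL);  /* caller must pass a valid buffer */
    assert(count > 0u);       /* avoids a divide-by-zero below */

    uint32_t sum = 0u;
    for (size_t i = 0u; i < count; i++) {
        sum += samples[i];
    }
    return sum / (uint32_t)count;
}
```

Keep in mind that the standard `assert` macro is compiled out when `NDEBUG` is defined, so it is a development aid and not a substitute for run-time error handling in production builds.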

The problem that I see most often is that we don’t rely on the defect management pyramid to minimize bugs and defects. In fact, many teams have this pyramid, but it is completely flipped upside down! We rely on debugging techniques the most to manage our bugs, then on development processes, and only then on design.

This is where developers are wasting on average 40 percent of their time! We need to flip the pyramid so that it is right-side-up and start focusing on the design and development processes that prevent defects and errors in the first place.

Conclusions

Developers have become too comfortable with blaming all the time that they spend debugging on bugs. Today we have discussed that we need to change our mindset, forget about bugs, and introduce defects and errors into our language so that we take responsibility for our “bugs”.

We have also seen that we can leverage the defect management pyramid to guide us on where we should be focusing our efforts if we would like to spend less time troubleshooting and more time innovating.

Jacob Beningo is an embedded software consultant who currently works with clients in more than a dozen countries to dramatically transform their businesses by improving product quality, cost and time to market. He has published more than 200 articles on embedded software development techniques, is a sought-after speaker and technical trainer, and holds three degrees which include a Master of Engineering from the University of Michigan. Feel free to contact him through his website www.beningo.com, and sign up for his monthly Embedded Bytes Newsletter.
