Think robots can't manipulate human behavior? Think again.

October 28, 2020

Several years ago, shortly after SoftBank took control of Arm, SoftBank Robotics America created and deployed “Pepper.” This four-foot-tall robot came equipped with artificial intelligence and facial recognition software so it could recognize human feelings and interact with passengers at airports. To convince humans that it understood their needs, Pepper used its 20-plus built-in motors and actuators to make meaningful gestures, move its head and arms, walk toward or away from people, shake their hands, and, most importantly, take food orders and bring beers to customers.

Friendly Pepper used to increase sales.

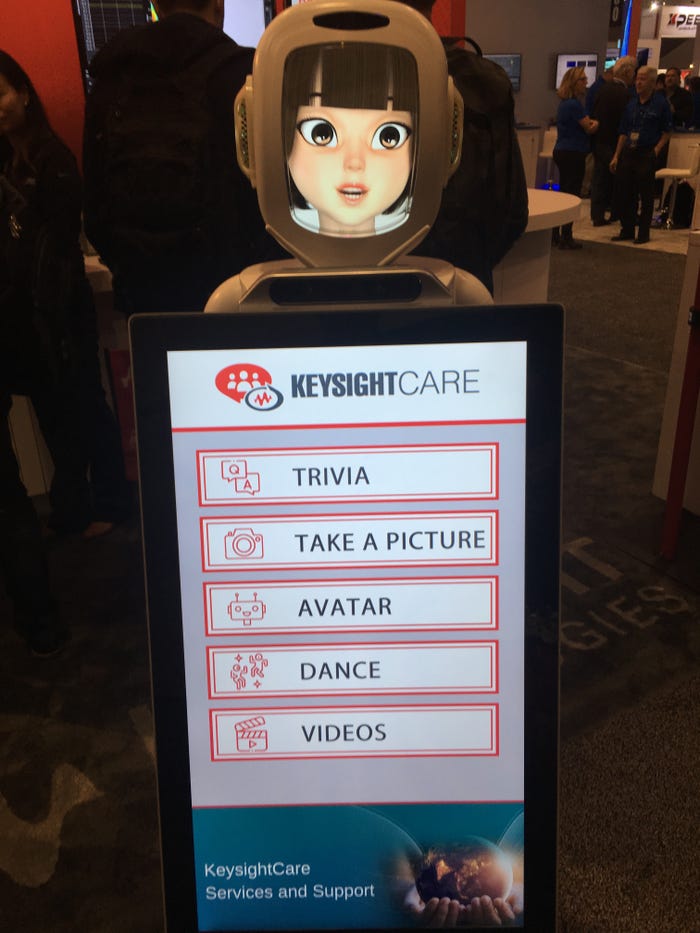

This is how many of us are beginning to interact with humanoid robots in our personal and professional lives. Before COVID-19, robots similar to Pepper were commonplace at technical conferences. For example, a “care-bot” at the Keysight booth greeted attendees, took their pictures, and even danced. True, this particular robot didn’t rely on AI; it was controlled with a joystick. Still, it offered a realistic preview of what robots will be capable of in the very near future.

Care-Bot at DesignCon 2020.

The “care-bot” did have one feature that set it apart from Pepper: an anime-style face on its head display. This matters for one simple reason: this type of robot can easily manipulate human behavior. Studies have shown that humans are emotionally swayed by animated characters in movies. Disney animators, experts in the art of creating the illusion of life, have demonstrated this time and time again. Their book, “The Illusion of Life: Disney Animation” by Frank Thomas and Ollie Johnston, reveals many of the tricks and techniques used to manipulate an animated image to create the illusion of life and the desired emotional reaction.

These same techniques are being used in physical robots. When non-technical people think of real-world robots, they may envision helpful, simple machines bringing beer to their table or greeting them at public events. But engineers and tech professionals know that the AI behind these robots is anything but simple. Behind a friendly exterior, it is capable of doing great good or great evil for humankind.

This was roughly the essence of a talk at the recent Arm DevSummit. Andra Keay, Managing Director of Silicon Valley Robotics, shared her insights in a presentation entitled “AI’s Effect on The Five Laws of Robotics.” She explained that, since Asimov's Three Laws of Robotics (plus the later Zeroth Law), well-meaning groups and global initiatives have developed hundreds of ethical guidelines. One update to those laws, which she referred to during her talk, is the Five Laws of Robotics drawn from the Engineering and Physical Sciences Research Council (EPSRC) Principles of Robotics.

Keay’s presentation was fascinating and well worth watching. For me, the key takeaway was that all of us can, and really must, help shape ethical AI in robots. This applies to simple robots, but especially to those being built to solve global problems. She concluded her talk with five positive steps that all of us can insist upon to ensure a brighter future:

Step 1: Develop a Robot Registry (and not just for drones).

This step extends EPSRC’s fifth law, which holds that robots should be identifiable, as should the person responsible for any robot. Keay suggested that every robot’s identity should be easily visible, just as it is for aircraft, boats, and vehicles.

Step 2: Create algorithm transparency.

Perhaps the best example of this is Google’s concept of model cards, a schema for benchmarking an algorithm and explaining it in terms that a policymaker or non-expert can understand. The goal, explained Keay, is to create a way to openly discuss known issues or dangers within an algorithm. From a technical standpoint, standards would typically fill this role. Unfortunately, standards are slow to emerge because they usually must wait until a new technology is somewhat mature.

Step 3: Create ethical independent reviews.

Independent ethical reviews can bridge that gap while standards are being formed. Good examples of successful ethical reviews can be found in bioethics and medicine, noted Keay.

Step 4: Establish robot ombuds people.

Keay proposed appointing robot ombuds people, i.e., arbitrators or referees. They might even be called “owls,” after Athena’s Owl of Wisdom, which sees, hears, and conveys to the goddess all things going on in the world. Robot ombuds people could listen to people's concerns about robots and, in particular, channel information from underrepresented communities to developers.

Step 5: Support the Silicon Valley Robotics (SVR) Industry Awards.

Her last recommendation was meant to reward good corporate behavior in robotic AI development. SVR has launched its inaugural innovation and commercialization awards this year. The awards focus on recognizing people for building good robots and companies for being good robot companies.

The responsibility for guiding the future behavior of robots (and humans) lies with all of us. Even ordinary people can shape the future through their economic choices as consumers, explained Keay. It’s certainly worth the effort to avoid the darkly comical “kill all humans” scenario from Futurama.

John Blyler is a Design News senior editor, covering the electronics and advanced manufacturing spaces. With a BS in Engineering Physics and an MS in Electrical Engineering, he has years of hardware-software-network systems experience as an editor and engineer within the advanced manufacturing, IoT and semiconductor industries. John has co-authored books related to system engineering and electronics for IEEE, Wiley, and Elsevier.
