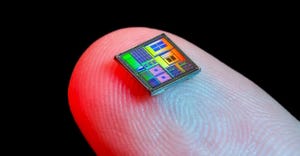

Wireless chargers involve tradeoffs.


What You Should Know About Wireless Charging

This video says the convenience of being able to charge your devices without wires comes with tradeoffs.

