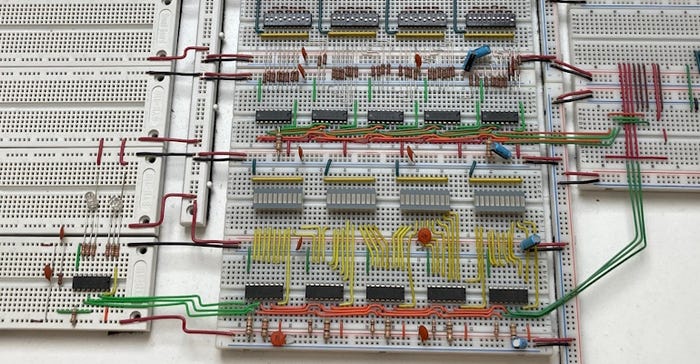

Ode to Bodacious Breadboards, Part 2

There’s always something new to learn, even with something simple like breadboards.
