Doubling performance every year is now the benchmark for quantum computers as designers look to EDA vendors for new automation tools.

January 16, 2020

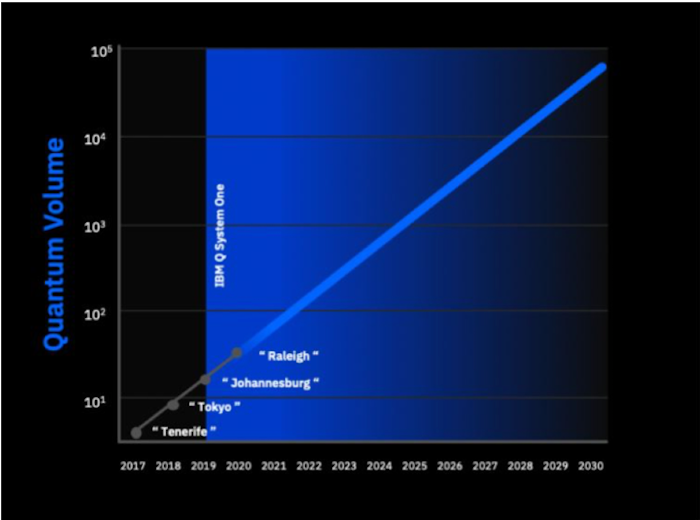

This week at CES, IBM announced that its newest quantum computer, Raleigh, doubled its Quantum Volume (QV). This is important because QV is a measure of the increasing capability of quantum computers to solve complex, real-world problems. But how does an increase in QV relate to existing measures such as semiconductor performance as dictated by Moore’s Law? Before answering that question, it’s necessary to understand what is really meant by Quantum Volume.

QV is a hardware-agnostic metric that IBM defined to measure the performance of quantum computers. It serves as a benchmark for the progress quantum computers are making toward solving real-world problems.

QV takes into account a number of factors affecting quantum computations, including the number of qubits, connectivity, and gate and measurement errors. Material improvements to the underlying physical hardware, such as increases in coherence times, reduction of device crosstalk, and software circuit compiler efficiency, can point to measurable progress in Quantum Volume, as long as all improvements happen at a similar pace, according to the IBM website.

Raleigh reached a Quantum Volume of 32 this year, up from 16 last year. This improvement stems from an improved hexagonal lattice connectivity structure with better coherence. According to IBM, the lattice connectivity helped reduce gate errors and exposure to crosstalk.

Over the last year, a number of quantum computing achievements have been reached, notes IBM. Among the highlights was the offering of quantum computing services by a number of traditional cloud providers. Naturally, IBM was on that list. Another notable was Amazon, which in December 2019 first offered select enterprise customers the ability to experiment with quantum-computing services over the cloud.

The Amazon platform will let clients explore different ways to benefit from quantum computers by developing and testing quantum algorithms in simulations. For example, quantum computers could be applied to climate-change simulation, optimization problems, cybersecurity, and quantum chemistry, among other areas. Clients will also have access to early-stage quantum-computing hardware from providers including D-Wave Systems Inc., IonQ Inc. and Rigetti Computing.

Now let’s see how the Quantum Volume measurement relates to transistor performance as delineated by Moore’s Law.

Image Source: IBM / Quantum Volume Growth Chart

Quantum Volume and Moore’s Law

IBM has doubled the power of its quantum computers annually since 2017. The company first made quantum computers available to the public in May 2016 through its IBM Q Experience quantum cloud service.

As mentioned earlier, Quantum Volume is a basic performance metric that measures progress toward Quantum Advantage, a term IBM uses to describe the point at which quantum applications deliver a significant, practical benefit beyond those of classical semiconductor computers. Potential use cases, such as precisely simulating battery-cell chemistry for electric vehicles and delivering faster derivative pricing in business markets, among many others, are already being investigated by IBM Q Network partners. To achieve Quantum Advantage in the 2020s, IBM believes that we will need to continue to at least double Quantum Volume every year.

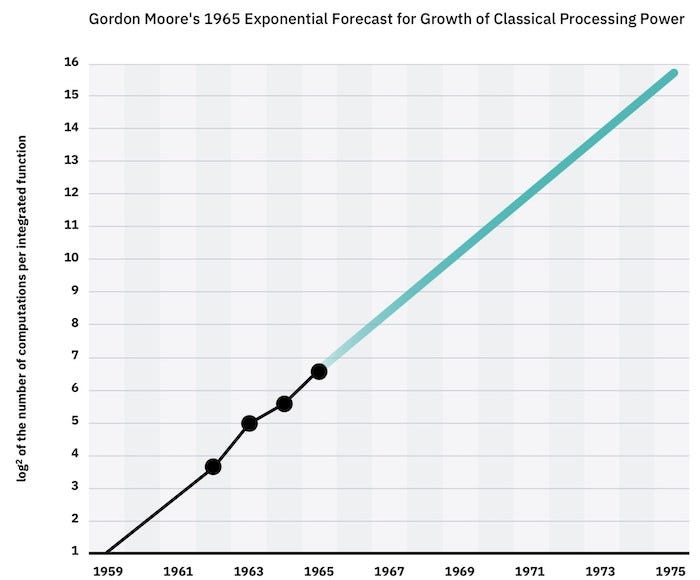

The phrase “doubling every year” is very familiar to anyone in the semiconductor and EDA chip design tool markets. As cited in a recent IBM press release, Gordon Moore postulated in 1965 that the number of components per integrated function would grow exponentially for semiconductor computers.

Since 2017, IBM quantum technology progress shows a similar early growth pattern to Moore’s Law on transistor scaling, thus supporting the premise that Quantum Volume will need to double every year as well. This doubling can be taken as a roadmap toward achieving Quantum Advantage.
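The arithmetic behind "doubling every year" can be sketched in a few lines of Python. This is only an illustration of the roadmap's premise, not an IBM tool: the function names `projected_qv` and `years_to_reach` are hypothetical, the starting point is the Quantum Volume of 32 reported for Raleigh in 2020, and the target value of 1,024 is an arbitrary example rather than a threshold IBM has stated.

```python
import math

BASE_YEAR = 2020  # year of the Raleigh announcement
BASE_QV = 32      # Quantum Volume reported for Raleigh in the article


def projected_qv(year: int, base_year: int = BASE_YEAR, base_qv: int = BASE_QV) -> float:
    """Quantum Volume in `year`, assuming it doubles every year from the base point."""
    return base_qv * 2.0 ** (year - base_year)


def years_to_reach(target_qv: float, base_qv: int = BASE_QV) -> int:
    """Whole years of annual doubling needed to reach at least `target_qv`."""
    if target_qv <= base_qv:
        return 0
    return math.ceil(math.log2(target_qv / base_qv))


if __name__ == "__main__":
    # Project forward under the assumed annual doubling.
    for year in range(2020, 2026):
        print(year, int(projected_qv(year)))
    # Arbitrary illustrative target, not an IBM figure.
    print("Years to reach QV 1024:", years_to_reach(1024))
```

Running the projection backward one year gives 16, which matches the 2019 figure cited above. Any forward value is only as good as the assumption that the doubling continues.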

"Today, we are proposing a roadmap for quantum computing, as our IBM Q team is committed to reaching a point where quantum computation will provide a real impact on science and business," said Dr. Sarah Sheldon, lead of the IBM Q Quantum Performance team, dedicated to quantum verification at IBM Research. "While we are making scientific breakthroughs and pursuing early use cases for quantum computing, our goal is to continue to drive higher quantum volume to ultimately demonstrate quantum advantage."

While having a roadmap is a good first step, it doesn’t deal with the challenge of programming quantum computers or what tool sets will be needed to make quantum hardware.

Image Source: IBM / Quantum Volume / Moore’s Law

Quantum Computing Tools

The latest IBM Quantum Volume benchmark shows that quantum computing is well on its way to mirroring Moore’s Law by doubling its capabilities every year. This realization has put pressure on semiconductor EDA tool vendors to start developing sophisticated tools for quantum computing.

This “pressure” is not necessarily a bad thing, as EDA tool vendors are currently wrestling with a potential slowdown in Moore’s Law for many market segments. This slowdown at lower nodes is the result of increasing complexity and cost. Additionally, the demand for lower-node designs is not as great, since several markets, such as IoT, are easily satisfied with higher-node (not leading-edge) tools and processes.

Existing silicon chips and technology will not be replaced anytime soon by quantum computers. Rather, quantum computers will be but one of many computing technology options for the future. It may well be that quantum computing chips will exist next to microprocessors, graphics processing units (GPUs), accelerators and other processors within a single computer or server.

Still, EDA tool vendors are in the best position to develop automation tools for creating quantum computers. But first they must figure out what type of quantum computers could be designed with existing chip tools and also fabricated in existing foundries. A profitable business case must be developed that supports these two tasks.

Aside from a profitable business case, automation tools won’t really be needed until it becomes too difficult to design quantum computers by hand. During a panel on future innovations at last year’s Design Automation Conference (DAC), IBM’s Rasit Onur Topaloglu explained that this very issue arose early at IBM, namely, the point at which automation tools will be needed to design quantum computers.

“We’ve concluded that up until 200 qubits, maybe we can still do it manually,” noted Topaloglu. “I am not going to project when we will reach 200 qubits, but we already have an 80 qubits architecture.” He went on to explain that, once quantum computing takes off, automation tools will be needed right away.

|

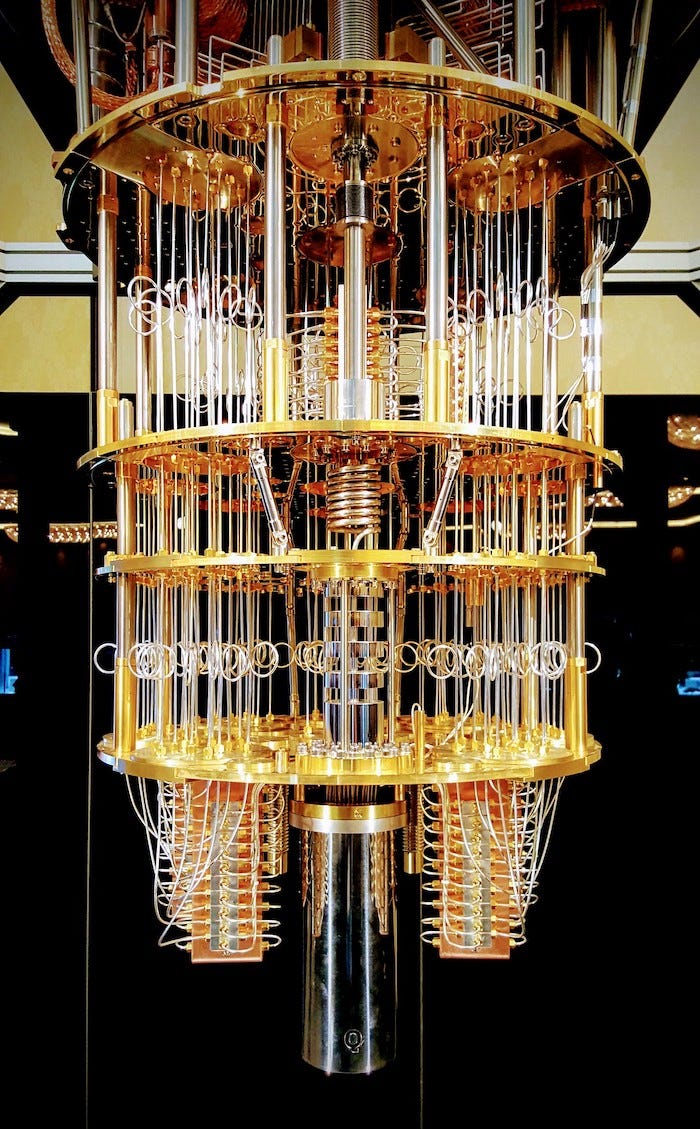

Image Source: Flickr / Creative Commons / Lars Plougmann |


John Blyler is a Design News senior editor, covering the electronics and advanced manufacturing spaces. With a BS in Engineering Physics and an MS in Electrical Engineering, he has years of hardware-software-network systems experience as an editor and engineer within the advanced manufacturing, IoT and semiconductor industries. John has co-authored books related to system engineering and electronics for IEEE, Wiley, and Elsevier.
